Three male rhesus macaques (Macaca mulatta) were used in this study. All procedures conformed to local and US National Institutes of Health guidelines, including the US National Institutes of Health Guide for the Care and Use of Laboratory Animals. All experiments were performed with the approval of the UC Berkeley Animal Care and Use Committee.

No statistical methods were used to predetermine sample size. The experiments were not randomized and investigators were not blinded to allocation during experiments and outcome assessment.

Visual stimuli

Face-patch localizer

The fMRI localizer stimulus contained five types of blocks, consisting of images of faces, hands, technological objects, vegetables and fruits, and bodies. Face blocks were presented in alternation with non-face blocks. Each block lasted 24 s (each image lasted 500 ms). In each run, the face block was repeated four times and each of the non-face blocks was shown once. A block of grid-scrambled noise patterns was presented between each stimulus block and at the beginning and end of each run. Each run lasted 408 s.

Stimuli for electrophysiology experiments

Human faces

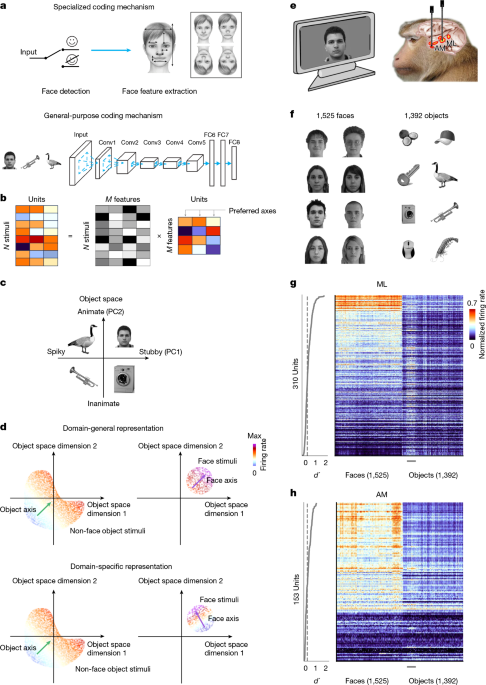

We acquired 2,000 frontal views of faces, as in ref. 42, from various face databases: FERET49,50; CVL (Peter Peer, CVL Face Database, Computer Vision Laboratory, University of Ljubljana, Slovenia; http://www.lrv.fri.uni-lj.si/facedb.html)48; MR2, ref. 51; Chicago Face Database52; CelebA53; FEI (fei.edu.br/~cet/facedatabase.html)47; PICS (pics.stir.ac.uk); Caltech Face Dataset 1999 (Caltech DATA, 2022; https://doi.org/10.22002/D1.20237); Essex (Face Recognition Data, University of Essex, UK); and MUCT (www.milbo.org/muct)54. The faces were aligned using facial landmarks as in ref. 42 with an open-source face aligner (github.com/jrosebr1/imutils).

For Extended Data Fig. 4c (right), we used synthetic face images from Syn-Vis-v055.

Non-face objects

We used 1,392 different images of isolated objects, previously used in ref. 6.

For monkey A, we presented a subset of 1,525 faces and 1,392 objects. For monkey J, we presented all 2,000 faces and 1,392 objects. For monkey M, we presented a subset of 1,050 faces and 1,392 objects. We presented a smaller number of faces to monkeys A and M to increase the number of repetitions per stimulus; all the analyses for monkey J used only the same subset of 1,525 faces that was presented to monkey A. For all experiments using monkey A and Extended Data Fig. 3q–s for monkey M, we showed the human faces and objects in separate blocks. In each block, we showed a training set (a random subset of 1,425 faces or 1,292 objects) for one repetition, followed by the test set (the remaining 100 faces or 100 objects) for three repetitions. The face and object blocks were interleaved and repeated until enough repetitions were acquired (around 10 repetitions per training image and 30 repetitions per test image). For all experiments using monkey J and Extended Data Fig. 3t–v for monkey M, stimuli were not separated into blocks; instead, images were drawn randomly from the combined pool of faces and objects. All images were rendered in greyscale and subtended 3.9° × 3.9° of visual angle.

Mixed image pool

To characterize neurons outside face patches, we presented a combination of six image sets: stubby objects (n = 600), spiky objects (n = 600), monkey bodies (n = 600), animals (n = 1,087), faces (n = 600) and other general objects (n = 1,593). The stubby, spiky, animal and general-object images were previously used in ref. 6. The monkey body images were sourced from Flickr (https://www.flickr.com/) and edited manually to mask visible faces. The face images were randomly selected from the human face set described above.

Non-human and ambiguous face-like stimuli

To probe responses to non-human and ambiguous face stimuli, we used monkey faces (n = 1,100, previously used in ref. 42), dog faces (n = 600, from the AFHQ (Animal Faces-HQ) dataset, https://www.kaggle.com/datasets/andrewmvd/animal-faces) and pareidolia images with matched controls (n = 400, from ref. 56).

The illustrative dog photograph in Extended Data Fig. 5l and the illustrative monkey-body photographs in Extended Data Fig. 6a,b were obtained from StockSnap (stocksnap.io; CC0).

Degraded faces

To test responses to degraded faces, we rendered occluded, noisy (blended with 50% spectrally equivalent noise) and Mooney versions of 200 images from the human face set described above.

Synthetic faces

We generated 2,000 synthetic faces using the face model introduced in ref. 3, which defines a 50D face feature space consisting of 25 shape and 25 appearance dimensions. To constrain variation along low-level features, we restricted the first five shape and first five appearance dimensions (a 10D low-level subspace) to five discrete vectors and generated 400 faces per low-level position. The remaining higher-dimensional features were allowed to vary freely, sampled from Gaussian distributions, ensuring that identity differences in this set were driven primarily by high-dimensional variation.

Face cell screening set

We presented a screening stimulus set consisting of six object categories (faces, bodies, hands, gadgets, fruits and scrambled patterns) with 16 identities each (Extended Data Fig. 1e–h).

Behavioural task

For electrophysiology experiments, monkeys were head-fixed and passively viewed a screen in a dark room. Stimuli were presented on an LCD monitor (Asus ROG Swift PG43UQ). Screen size measured 26.0° × 43.9° visual angle. Gaze position was monitored using an infrared-camera eye-tracking system (Eyelink, SR Research) sampled at 1,000 Hz.

All monkeys performed a passive fixation task for both fMRI scanning and electrophysiological recording. Juice reward was delivered every 2–4 s in return for monkeys maintaining fixation on a small spot (0.2° in diameter).

MRI scanning and analysis

Subjects were scanned in a 3T PRISMA (Siemens) magnet. First, anatomical scans were done using a single-loop coil at isotropic 0.5 mm resolution. Then functional scans were done using a custom eight-channel coil (MGH) at isotropic 1 mm resolution, while the monkeys performed a passive fixation task. Contrast agent (Molday ION) was injected to improve the signal-to-noise ratio. Further details about the scanning protocol can be found in ref. 28.

Analysis of functional volumes was done using the FreeSurfer Functional Analysis Stream57 and FSL58. Volumes were corrected for motion and undistorted based on the acquired field map. Runs in which the norm of the residuals of a quadratic fit of displacement during the run exceeded 5 mm and the maximum displacement exceeded 0.55 mm were discarded. The resulting data were analysed using a standard general linear model. The face contrast was computed as the average of all face blocks compared with the average of all non-face blocks.

Electrophysiological recording

We used Neuropixels 1.0 NHP (non-human primate) probes34 (probes 45 mm long with 4,416 contacts along the shaft, of which 384 are selectable at any time) to perform electrophysiology targeted to the face patches ML and AM. To insert the probes, we developed a custom insertion system composed of a linear rail bearing and 3D-printed fixture enabling a precise insertion trajectory. Face patches were initially targeted with single tungsten electrodes before the Neuropixels recordings, following methods for MRI-guided electrophysiology described previously59. All Neuropixels data were acquired using SpikeGLX or OpenEphys60 acquisition software and spike sorted using Kilosort 3 or 461,62 with the threshold parameter set to (10, 4).

We included data from monkey A for 13 sessions, from monkey J for one session and from monkey M for one session. Results across all sessions were consistent. Data shown in Figs. 1–5 and Extended Data Fig. 3a–p were from one session using monkey A; data shown in Extended Data Fig. 2 were from one session using monkey J; and data shown in Extended Data Fig. 3q–v were from one session using monkey M.

The percentages of face-selective cells recorded with Neuropixels probes are lower than those reported previously using single tungsten recordings15,26. This is probably because Neuropixels probes capture activity from many nearby neurons, including smaller or less well-isolated units, as well as neurons that are not strongly visually driven, and because portions of the probe often extended beyond the face-patch boundaries (Fig. 1g,h and Extended Data Fig. 1g,h).

Data analysis

Python v.3.10 and MATLAB R2023a were used for analysis. Only visually responsive cells were included for analysis. To determine visual responsiveness, a two-sided Student’s t-test was done comparing activity between −50 ms and 0 ms with that of 50–300 ms after the stimulus onset. Cells with P < 0.05 were included. Wherever further cell selection was done (for example, to cull cells whose activity could be well explained by an axis model, as determined by R2), it is indicated in the relevant section of the paper.

Here, we summarize these inclusion criteria. In Figs. 2–4, we analysed the same subset of cells that satisfied all the following criteria: significant visual responsiveness to our main stimulus set consisting of 1,525 faces and 1,392 objects; non-zero response variance across stimuli; peak d′ ≥ 0.2 within 80–140 ms (see ‘Face selectivity index’ section); and the presence of positive R2 on a held-out test set for both face and object axes, in both the general object space and the face space (see ‘AlexNet general-object space and face space’ section), at 80–140 ms. This resulted in 151 of 563 units in ML of monkey A, 76 of 248 units in AM of monkey A, 131 of 467 units in ML of monkey J, and 84 of 353 units in AM of monkey M. For Fig. 5, to include a larger population of cells for face reconstruction, we used a different inclusion criterion. Specifically, we included cells that were visually responsive to the face cell screening set (see ‘Visual stimuli’ section) and showed significantly different responses to faces versus non-face objects in that set (two-sided Student’s t-test, P < 0.05).

Face selectivity index

The face selectivity, d′, was defined for each cell and each 1 ms time bin as:

$$d_t^\prime =\fracE(r_\rmface,t)-E(r_\rmobject,t){\sqrt\frac12(\sigma _\rmface,t^2+\sigma _\rmobject,t^2)}$$

Where E(rt) and σt2 are the mean and variance of responses of the cell over stimuli at time t, respectively. We further calculated the peak d′ over a 20-ms sliding window between 80 ms and 140 ms (corresponding to the time interval in which we observed the main face-selective responses in raw time courses; Fig. 3d):

$$\mathrmpeak\ d^\prime =\mathop\textmax\limits_T\in [80,120]\,E_t\in [T,T+20](d_t^\prime ).$$

We then selected cells with peak d′ ≥ 0.2.

In Extended Data Fig. 1f–h, the face selectivity index (FSI) was defined for each cell as:

$$\rmFSI=\fracr_\rmface-r_\rmnon-\rmfacer_\rmface+r_\rmnon-\rmface$$

where r is the average neuronal response in a 50–220 ms window after stimulus onset.

Average response profile

Mean responses of each cell to each stimulus were computed in a 50–220 ms window after stimulus onset. Responses were then normalized for each cell to the range [0, 1], where the minimum response was assigned 0 and maximum was assigned 1.

AlexNet general-object space and face space

We used AlexNet32 to embed stimulus images into a high-dimensional latent space. Specifically, images were passed through a pretrained version of the model in MATLAB (Mathworks) and 4,096-dimension features were extracted from layer fc6. To further reduce dimensionality, we performed PCA. We built two feature spaces using this approach: a 60-dimension general-object space and a 60-dimension face space.

To build the 60-dimension general-object space, we performed PCA on fc6 responses to a set of 100 face images (randomly selected from the 2,000 faces in our face database) and 1,292 object images. This general-object space could explain 80.9% (61.6%) of the variance in the fc6 features of the object (face) stimuli. We normalized each dimension such that the projection of all stimuli along the dimension had a mean of 0 and an s.d. of 1. This general-object space was used for the analyses shown in Figs. 2 and 3 and Extended Data Figs. 2a–j, 3a–j, q–v, 4, 5a–e. For visualization purposes, we also used the 2D subspace consisting of the first two PCs of this space, as in ref. 6.

To build a 60-dimension face space capturing variation in face-specific features, we performed PCA on fc6 responses to a set of 1,425 face images. The face space explained 86.4% of the variance in the fc6 features of the face stimuli. This face space was used for the analyses shown in Fig. 4 and Extended Data Figs. 2k–p, 3k–p and 9a–c.

To build a 60-dimension face feature space using a ResNet-50 model trained on the VGGFace2 dataset36, we used a PyTorch implementation of the VGGFace2 model available at https://github.com/cydonia999/VGGFace2-pytorch. We extracted responses from the final fully connected (fc) layer to a set of 1,425 face images and performed PCA. The resulting 60 principal components defined the face feature space used in the analyses shown in Extended Data Fig. 8f–i.

Preferred axis of cells

The preferred axis of each cell was computed using linear regression as follows:

$$P_\rml\rmi\rmn=(\bfr-\barr)F(F^TF)^-1$$

where r is a 1 × n vector of the firing-rate response to a set of n face stimuli, \(\barr\) is the mean firing rate and F is an n × d matrix, where each row consists of the d parameters representing each image in the feature space. As mentioned above in ‘Stimuli for electrophysiology experiments’, we split the full image set into training and test sets, and linear regression models were trained on the training set and tested on the held-out test set. In all figures, R2 for the held-out test set is shown.

Stimulus distribution control

To evaluate whether the shape of our stimulus distribution had any effect on the axis directions (Extended Data Fig. 4d–i), we fitted multivariate Gaussian distributions to the face and object training sets, and then identified subsets of 500 faces and 500 objects with the highest probability density under the face and object Gaussian distributions, respectively.

Cross prediction between face and object axes

To evaluate how well the face axis generalizes to object responses and vice versa, we trained a linear regression model with the training set for faces (objects) and then tested on the test set for objects (faces) (Fig. 2c and Extended Data Figs. 2c, 3c and 4l).

Normalized face–object axis correlation

To quantify the correlation between the face and object axes of each cell while accounting for the out-of-distribution effect, we first measured the within-category axis correlation by calculating the correlation between axes estimated from two random subsets of images from the same category. We then used the mean of the face subset–face subset correlation and the object subset–object subset correlation for each cell as the upper bound imposed by the out-of-distribution effect. Finally, we calculated the correlation between the face and object axes of each cell and divided this raw correlation by the upper bound to obtain the normalized face–object axis correlation (Extended Data Figs. 2d, 3d and 4m).

Artificial-unit comparison

To observe how a unit with a single axis across both face and object space would behave, we identified artificial face-selective units from the AlexNet fc6 layer and redid our analyses for real neural units on them (Extended Data Fig. 4j–m). Because the general-object space was built by PCA from the activities of AlexNet fc6-layer unit activities, a unit in the same layer should respond linearly to the features in the general-object space, thus providing a model neuron with a single encoding axis. Given that there are features not perfectly explained by the feature space, the artificial units should preserve effects from the out-of-distribution generalization. We first identified face-selective AlexNet fc6 units by calculating the FSI for each unit using its response to the face cell screening set. We then repeated the analyses of Fig. 2a–c using the responses of the 126 most face-selective units (FSI ranging from 0.23 to 0.46).

Time-varying axis analysis

We fitted axes to the average neural responses in 20-ms windows over the trial duration of 0–300 ms after stimulus onset (Figs. 3 and 4 and the related Extended Data figures). To quantify the alignment between axes at different latencies, we computed cosine similarity between the time-varying face or object axis at each latency and the trial-wide object axis computed across 50–220 ms (Fig. 3e and Extended Data Figs. 2h, 3h and 8a–e, rightmost column).

Population response sparseness

To characterize the sparseness of face-cell population responses to faces and objects, we computed a modified Treves-Rolls population sparseness63. Specifically, we calculated

$$S_i,t=1-\frac\left(\sum _j=1^Nr_j,i,t\right)^2N\sum _j=1^Nr_j,i,t^2$$

where N is the number of neurons and rj,i,t is the response of neuron j to stimulus i at time t, for all stimuli and times. To compare population response sparseness to faces and objects, we took the mean over all face and all object stimuli, respectively, at each time point.

Identifying top- and bottom-projected images for response PC1 and PC2

We performed PCA on the time-averaged population response matrix across all stimuli and treated the first two PCs (PC1 and PC2) as pseudo-units. For each response PC, we computed its face and object axes using a 20-ms sliding time window. We then projected all the stimuli onto each axis and identified the top five and bottom five images based on projection values, representing the most and least preferred stimuli for each axis over time.

Single-stimulus axis-change score

To assess whether an individual face image elicits a change in the population code, we avoided any per-cell normalization and worked with raw firing rates. Because large between-cell firing rate differences can mask population rank-order changes, we excluded extreme-firing-rate cells before analysis.

For each image, we computed the Pearson correlation between the population response vectors at an early (60–80 ms) and a late (100–120 ms) window across all face-selective cells. We then mapped the correlation values to face probabilities using a simple single-feature logistic regression classifier trained to discriminate between face and object labels from the correlations. The classifier output is reported as the single-stimulus axis-change score: higher values indicate a greater ‘faceness’ of an image (more negative early-versus-late population correlation).

Recording outside face patches and quantifying image selectivity

We used fMRI localizers6 to target the middle stubby, spiky and body patches in the monkeys as in ref. 6. Neuropixels probes were inserted into these regions and neural activity was recorded while the monkeys viewed a mixed image pool (see ‘Visual stimuli’ section). For each neuron, we quantified discriminability (peak d′) for its most responsive stimulus category using the same procedure described above for quantifying face selectivity. Neurons were pooled across sessions for subsequent analyses (Extended Data Fig. 6).

Computing axis correlation between short and long latencies

To quantify changes in face and object axes (Fig. 4 and related Extended Data figures), we computed the cosine similarity between the face axes at three different latencies (80−100 ms, 120–140 ms and 160–180 ms), and similarly for object axes. We chose to focus on these three time windows because they straddled the population response peak of face cells (Fig. 3d). We note that individual cells sometimes showed salient changes in response to objects over time; our analyses and conclusions are intended to capture population-wide changes in tuning.

In Fig. 4c,d, to better account for different latencies in responses of different units to faces and objects (see the example cell in Fig. 3f, top), for each unit, we took the short latency to be the first 20-ms window in which the mean response of that unit was at least 2 s.d. above the mean baseline (−25–25 ms) response of that unit to faces and objects, respectively.

Control analysis to rule out cell-intrinsic axis-change hypotheses

In hypothetical scenario one, axis dynamics is always accompanied by a high mean response magnitude (Extended Data Fig. 7a). To address this, we identified the 100 most-effective non-face stimuli, as well as the 100 least-effective face stimuli (Extended Data Fig. 7b). The former evoked a greater mean response, averaged over 50–220 ms, than the latter (Extended Data Fig. 7c; mean response ratio = 1.29). However, the two types of stimuli evoked very different response dynamics (Extended Data Fig. 7d), and comparison of axis weights across different time windows revealed axis change only in response to the face stimuli (Extended Data Fig. 7e–i). This shows that the presence of discriminable face features is necessary to trigger axis change; high mean response magnitude is insufficient.

In hypothetical scenario two, axis-change dynamics could be due to delayed responses to weaker stimuli leading to a change in tuning (Extended Data Fig. 7j). Specifically, if weaker responses are more delayed, then at longer latencies, stronger responses may already have subsided while weaker responses persist. This temporal offset could result in weaker responses appearing stronger than the diminished stronger responses, thereby giving the phenomenon of a reversed tuning profile. To address this, we first identified the 50% most-effective and 50% least-effective face stimuli for each cell, determined by the mean response in the early time window 80–100 ms. We then computed face axes separately using these two groups of stimuli, at both short (80–100 ms) and long (120–140 ms) latency. If axis-change dynamics were driven solely by delayed onset of weak stimuli, then one would predict: a lack of correlation between axes computed using the most-effective and least-effective faces at long latency; and positive correlation between axes at short and long latency computed using the most-effective faces (determined by response at short latency). Neither of these predictions was supported by the data (Extended Data Fig. 7k,l). In particular, the negative correlation that we observed experimentally between axes computed using the most-effective faces at short and long latency rules out the possibility that axis reversal is driven solely by responses to non-optimal, low-contrast stimuli (Extended Data Fig. 7l).

In hypothetical scenario three, axis-change dynamics could be due to a cell-intrinsic increase in firing threshold following a strong transient response (adaptation; Extended Data Fig. 7m). To investigate this possibility, we measured the axis tuning of model cells encoding a single axis with and without application of a raised threshold. We found that the resulting axes were still highly correlated (Extended Data Fig. 7n). This contrasts with our actual results (Fig. 4a–d), ruling out cell-intrinsic adaptation as the source of the change in neural code.

Generating low and high spatial frequency-filtered images

Each image from our original set of 1,525 face images was first convolved with a Gaussian filter (σ = 0.044°) to generate a low spatial frequency-filtered image. This was then subtracted from the original to compute a high spatial frequency image (Extended Data Fig. 7o–v).

Identifying new tuning directions for each cell

In Fig. 4c,d, to better identify new tuning directions that emerge at long latency (Fig. 4g–k and related Extended Data figures), we compared the face axes of each cell at 80–100 ms, denoted as ν1, to the axes at 120–140 ms, denoted as ν2 (AM time windows were delayed by 20 ms). We decomposed ν2 =νǁ + ν⊥, where νǁ = ⟨ν1, ν2⟩(ν1/|ν1|2) is the component of ν2 parallel or antiparallel to ν1, and ν⊥, the component of ν2 orthogonal to ν1, is given by ν⊥ = ν2 − νǁ. For visualizations in Fig. 4h–k, we computed the principal orthogonal direction for each ν⊥. Specifically, for each stimulus with embedding u in the face space, we orthogonalized u with respect to ν⊥ by computing u⊥ = u − ⟨u, ν⊥⟩(ν⊥/|ν⊥|2). Then we performed PCA over all u⊥ and took the first PC as the principal orthogonal direction to ν⊥.

Face reconstruction

To reconstruct faces from neural activity (Fig. 5 and Extended Data Fig. 2q,r), we leveraged a probabilistic generative model64. The model followed the design of variational autoencoders65, with an encoder mapping the input images (resized to 128 × 128) into latent features defined with a variational distribution and a decoder projecting the features to the original inputs. The encoder and decoder each consisted of five convolutional layers, and the latent features were set to 512 dimensions. A regularizer was used to minimize discrepancies between the latent distribution and a Gaussian prior, which better supports data on low-dimensional manifolds. We also included an additional objective to align the latent features (after linear projection) with fc6 features from AlexNet, to ensure that the latent space described similar features to the general-object space. We trained the generative model on 1,900 faces and 1,292 objects, and validated the model on the 200 held-out images (100 faces and 100 objects).

To compare the reconstructed faces from neural responses at short and long latency, we used averaged population responses from several time windows. For ML cells, short-latency responses were averaged from 50–75 ms and 75–100 ms, and long-latency responses from 120–145 ms and 145–170 ms. We also used a combined window composed of windows from 62–87 ms and 132–157 ms. Thus, each of the three windows comprised two sub-windows. Note that the three windows each spanned exactly the same duration of time. The AM time windows were delayed by 20 ms. For each window, we treated responses from the two sub-windows as if they were from distinct cells, enabling the decoder to assign distinct axes to each sub-window (Fig. 5a).

For reconstruction, after training and freezing the generative model, we linearly decoded 512-dimension feature vectors from neural responses to each image using responses from the short, long and combined time windows (thus three separate linear decoders were trained). We then fed the latent features into the decoder of the probabilistic generative model to reconstruct faces. For learning linear decoders mapping neural activity to latent features, we trained on 1,425 face images (a subset of the 1,900 images used for generative model training) and tested on the remaining 100.

We also passed each original face image through the encoder and decoder of the generative model directly to obtain the best possible reconstruction of each face, allowing us to separate fine face-feature loss in the generative model itself from decoding inaccuracy due to noise in the neural signal.

Visualizing new tuning directions

To visualize new tuning directions of cells (ν⊥; Fig. 4h–k), for each cell we reconstructed a series of faces with features that gradually varied along ν⊥, leveraging the probabilistic generative model described above (‘Face reconstruction’ section). Because ν⊥ lies in our 60-dimension face space, we also trained a linear transformation matrix to transform features from this 60-dimension face space to the generative model’s latent space (512 dimensions). We first normalized each cell’s axis to unit length and then sampled seven locations along it, at projection lengths of [−4, −2, −1, 0, 1, 2, 4] σ, where σ is the s.d. of the projections of our 1,425 original faces onto ν⊥. This yielded seven 60-dimension feature vectors, which we then transformed to 512 dimensions. Finally, we fed these 512-dimension features into the generative model to generate the reconstructed faces.

Using a DNN to quantify face identification performance

To quantify the goodness of reconstruction (beyond human visual evaluation; Fig. 5c,d), we leveraged a DNN pretrained to discriminate faces38 (using the VGGFace2 dataset36), whose feature space provides an objective metric for evaluating similarity between faces. We embedded all our neural-reconstructed faces and best possible reconstructed faces into the feature space of this DNN and then calculated the Euclidean distance between each face’s neural reconstruction and its best possible counterpart.

To compare the goodness of reconstruction among the three time windows, in Fig. 5c,d, we compared the distance of reconstructions from the three time windows to the best possible reconstruction for each face. The closest face of the three was considered to be ‘correct’. We repeated this recognition task for all 1,525 faces. We further repeated this whole process using only subsets of the neurons to investigate how the number of cells affects the reconstruction quality for different time windows. For each cell count, we randomly resampled the cells 30 times to estimate the s.e.m.

Simulating results of face reconstruction analyses

We simulated a neural population whose short-latency responses tuned to a smaller set of face dimensions and whose long-latency responses tuned to a larger set of face dimensions, to test our hypothesis that the results of Fig. 5d might be explained by a trade-off between redundancy and diversity.

Simulated neuron responses were created by linearly combining different dimensions of the latent space features of the DNN38 mentioned above. We first performed PCA on this DNN’s latent space to get a 60-dimension feature for each image. Then, for simulating short-latency responses of each artificial neuron, we linearly combined a random subset of the first five dimensions of the features and applied Gaussian noise. For long-latency responses, we linearly combined a random subset of dimensions from the full 60 dimensions and applied Gaussian noise. These simulated neurons were then treated as real neurons and underwent the face reconstruction and recognition analyses described above.

Face categorization and discrimination time course

For both face categorization (face versus object) and face identity discrimination, we summarized how well the two categories or single identities are separated in population space. In each time window, let \(\bfr_i\in \bfr^N\) be the population response vector (across N units) to face image \(i\) (distinct identity). We computed all pairwise Euclidean distances \(d_ij=\bfr_i-\bfr_j_2\) across categories or across identities and reported a population separation index (PSI):

$$\rmPSI=\frac\rmmean_i < jd_ij\sigma _\bfr$$

where σr is the pooled s.d. of responses across units and identities in the window. PSI is unitless and increases when category- or identity-specific responses are more dispersed relative to the overall response variability. We repeated this computation for each 20-ms window to obtain the categorization and discrimination time courses (Extended Data Fig. 9d,f).

Recurrent neural network training

We trained a recurrent neural network with 100 units whose dynamics at each timestep were described by

$$\bfh_t=\tanh (\bfx_t+W\bfh_t-1)$$

where ht is the vector of unit activations at time t, W is the weight matrix and xt is the external input at time t. During training, the network was run for two timesteps with the same random linear gradient provided as input at both timesteps, and the mean squared error between the output at time 2 and the reversed input gradient was used as the loss. The network was trained for 10,000 iterations, each with a random linear gradient, using the Adam optimizer (learning rate 0.001).

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.