Ethics statement

De-identified tissue samples were collected at Stanford University School of Medicine from elective termination of pregnancy procedures with informed consent for the research use of tissues in observance of relevant legal and institutional ethical regulations. No demographic information was collected. Consent was obtained by the medical team. The relevant tissue sample processing and analyses were performed under protocol SCRO-796, approved by the Stem Cell Research Oversight Panel (SCRO) at Stanford.

Sample collection and nuclei isolation

Tissue samples were delivered on ice and immediately stored in liquid nitrogen prior to processing. A multi-tissue compatible nuclei isolation protocol was developed to efficiently isolate stable nuclei for further library preparation. In brief, for a given sample, 100–200 mg of tissue was added directly into 1 ml of Nuclei Extraction Buffer (250 mM Sucrose, 25 mM KCl, 5 mM MgCl2, 20 mM HEPES-KOH, 65 mM β-glycerol, 0.5% IGEPAL CA-630, 1× protease inhibitor, 1 mM DTT, 0.2 mM Spermine, 0.5 mM Spermidine, 60 U ml−1 RNasin Plus, 2–5% normal goat serum) in a chilled 2 ml dounce homogenizer (Kimble 885300-0002) on ice. The sample was incubated for 10 min on ice. The sample was dounced 20 times each with pestle A then with pestle B. Sample was transferred to a DNA low binding tube. Three hundred µl additional Nuclei Extraction Buffer was used to rinse any remaining nuclei from dounce homogenizer. Sample was incubated with vertical rotation for 5 min at 4 °C. Sample was filtered using a 70-µm Flowmi strainer. Volume was adjusted with additional Nuclei Extraction Buffer to 1.2 ml total volume. Thirty-seven per cent formaldehyde was added to the sample for a 0.2% final formaldehyde concentration and incubated for 4 min at room temperature with vertical rotation. Fixation was quenched with 125 mM glycine for 8 min at room temperature with vertical rotation. Nuclei Extraction Buffer was added to the sample for a final volume of 1.4 ml. An iodixanol gradient was prepared to enrich nuclei from homogenate. In brief, 50% iodixanol solution was prepared from 60% iodixanol with the addition of 1 mM DTT, 60 U ml−1 RNasin Plus, and 2–5% normal goat serum. The sample was mixed with an appropriate amount of iodixanol for a final 22% iodixanol concentration. 44% iodixanol solution was layered below the sample. Then, a 22% iodixanol solution was gently added between the sample and the 44% iodixanol solution layer. The sample was centrifuged at 3,500g for 30 min at 4 °C with brakes off. The nuclei layer was separated with gentle pipetting for further processing.

SHARE-seq library preparation

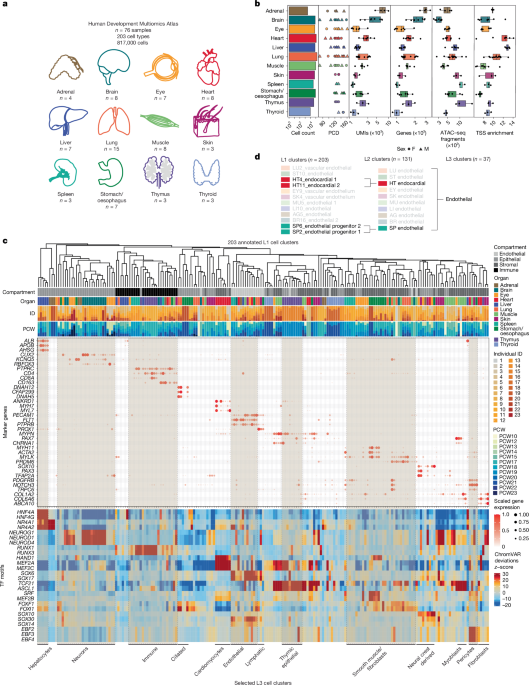

The full protocol is described in Supplementary Note 1, adapted from published SHARE-seq protocols21,91. In total, we processed 76 tissue samples derived from 23 individuals, across 12 tissue processing and SHARE-seq library preparation batches, where each batch corresponded to all samples of a given organ.

Library sequencing

All DNA libraries were sequenced on a NovaSeq 6000 using 300-cycle S4 v1.5 reagent kits with XP workflow. Paired-end sequencing was run with a 96-99-8-96 configuration (Read1-Index1-Index2-Read2). We quantified DNA libraries using Qubit and Tapestation, then prepared library pools at 1.5 nM concentration for a final loading concentration of 300 pM. Sequencing was performed at the Stanford Genome Technology Center.

VISTA embryo histology

We received X-Gal-stained and fixed whole mouse embryos in PBS from L. Pennachio26,27 and transferred them to 70% ethanol for storage. Paraffin embedding was performed by Histo-Tec Laboratory using a xylene-free dehydration protocol as xylene could dissolve the X-Gal stain. In brief, the embryos were sequentially dehydrated with 80%, 95%, 100%, 100% and 100% ethanol for 20 min each, followed by washes with 50:50, 80:20, 90:10 and 100:0 paraffin:alcohol mix for 20 min each to remove the ethanol. Subsequent embedding and H&E staining was performed with standard protocols on 5-μm sections.

SHARE-seq data pre-processing

We developed a highly parallelized, rapid, and storage-efficient pre-processing Snakemake (v7.15.1)92 pipeline to convert BCL files from sequencers to ATAC fragment files and RNA sparse matrices (Extended Data Fig. 1). In brief, raw BCL files were first converted to FASTQ files using a custom script that parallelizes the bcl2fastq (v2.20.0.422, Illumina) conversion by flow cell tiles, parses the read cycles, and demultiplexes the raw FASTQ files into sublibraries based on sublibrary barcodes in the Index2 reads. For each sublibrary, we further split the FASTQ file into random chunks of 20 million reads.

Within each chunk of an ATAC–seq sublibrary, we performed barcode matching against the SHARE-seq barcode whitelist, allowing for 1 bp mismatch for each of the three rounds of 8 bp barcodes that make up a single-cell barcode, followed by Nextera adapter trimming with fastp (v0.23.2)93, genome alignment with Bowtie2 (v2.5.0)94, and conversion of the output BAM file to a more storage-efficient fragment file. We then merged the fragment files from all chunks of a sublibrary, deduplicated fragments per cell based on start and end coordinates, and demultiplexed the fragments into samples based on round 1 cell barcodes. Finally, for each sample, we merged the demultiplexed fragment files for that sample across all sublibraries to generate the final ATAC–seq fragment files (*.fragments.tsv.gz, *.fragments.tsv.gz.tbi).

Within each chunk of an RNA sublibrary, we performed barcode matching, 10 bp UMI parsing from Read2, and adapter trimming for Read1 only, followed by genome alignment with STAR (v2.5.4b)95, gene annotation with featureCounts (v2.0.1)96, and conversion of the output BAM file to a more storage-efficient TSV format. We then merged the annotated TSV files from all chunks of a sublibrary, split into 12 barcode chunks based on round 3 barcodes, deduplicated UMIs per cell per annotated gene per barcode chunk using UMI-tools (v1.1.2)97, demultiplexed the deduplicated TSV files into samples based on round 1 cell barcodes, and converted the TSV files into the Matrix Market Exchange format. Finally, for each sample, we merged the demultiplexed Matrix Market Exchange files for that sample across all sublibraries to generate the final RNA sparse matrix files (*.matrix.mtx.gz, *.features.tsv.gz, *.barcodes.tsv.gz).

On average, we can process a 10B-read NovaSeq run in under 4 h using an academic high performance computing cluster. This pipeline can be easily adapted to process other split-and-pool-based single-cell multiomic data. All libraries were aligned to the hg38 reference genome. The pipeline is available at https://github.com/GreenleafLab/shareseq-pipeline (stable release v1.0.0). Raw sequencing reads have been anonymized using BAMboozle98 prior to public deposition to protect donor privacy. The anonymization code is available on the ‘anonymize’ branch of the shareseq-pipeline GitHub repository.

It is well known that in ATAC–seq experiments, Tn5 transposase forms a homodimer with a 9-bp gap between the two Tn5 molecules, resulting in two insertions 9 bp apart on different DNA strands per accessible site4,99. When sequencing the DNA fragments using paired-end sequencing, the start and end positions need to be adjusted based on the insertion offset of Tn5 to reflect the true centre of the accessible site at the midpoint of the Tn5 dimer. To account for the Tn5 offset, previous ATAC–seq studies used a + 4/−5 offset approach where plus-stranded insertions are adjusted by +4 bp, and minus-stranded insertions by −5 bp. However, this in fact results in a 1 bp mismatch of the adjusted insertion sites between the two fragments sharing a single transposition event (Extended Data Fig. 1b). The discrepancy may stem from the end-exclusive coordinate system used by BAM and BED files, as the original +4/−5 convention is only correct if the output file is interpreted in a non-standard 0-based, end-inclusive genomic coordinate system. This mismatch does not affect most downstream ATAC–seq analysis that bins insertions on the scale of hundreds of base pairs, but it does affect base pair-sensitive analysis such as transcription factor footprinting and motif analysis. In this SHARE-seq pre-processing pipeline, we have adopted the +4/−4 offset instead, which results in a consensus insertion site. See example motifs generated from reads corrected by either of these offset schemes in supplementary figure 3a in ref. 17.

SHARE-seq data QC and filtering

We performed per-sample quality control (QC) filtering by manually inspecting and thresholding the following metrics: (1) TSS enrichment ratio and number of fragments for ATAC–seq fragment files; (2) number of UMIs, number of genes and percentage of mitochondrial reads for RNA sparse matrices; (3) ratio of RNA UMIs versus ATAC–seq fragments to remove cells with low quality in one modality (Extended Data Fig. 2a). All sample filtering thresholds are summarized in Supplementary Table 1. No explicit batch effect correction was performed, as individual-specific effects are often confounded with temporal differences in cell type composition, making it challenging to separate these sources of variation.

RNA normalization, ambient RNA removal, dimensionality reduction and clustering

We used Seurat (v4.3.0)100 in R (v4.1.2) to process filtered RNA sparse matrices into Seurat objects per organ100. We adopted an iterative dimensionality reduction and clustering workflow to sequentially annotate cell types and filter out additional low-quality clusters (Extended Data Fig. 2a). For each iteration, we first performed SCTransform v2 and variable feature selection on RNA raw counts of each sample, then selected the top 3,000 consensus variable features across samples using the SelectIntegrationFeatures function from Seurat, excluding mitochondrial genes, sex chromosomes genes, and cell cycle genes to minimize batch effects. We merged the raw RNA counts from per-sample objects into a single matrix, performed SCTransform v2 using consensus features, and used the DecontX function from the celda (v1.6.1) package101 on SCT-corrected counts to remove ambient RNA contamination per cell. The decontaminated counts were then split by sample, scaled to 10,000 UMIs per cell and log-normalized. Similar to the process mentioned above, we selected a list of top 3,000 consensus variable features from the per-sample variable features. Principal components analysis was performed on the merged object with the consensus features, followed by cell clustering using the Louvain algorithm at a resolution of 0.3 with 50 principal components and uniform manifold approximation and projection (UMAP) embedding. We then inspected each cluster and removed any low-quality clusters with significantly lower UMIs than other clusters, high levels of co-expression for different tissue compartment markers that are biologically impossible and suggestive of doublets (for example, high expression for both epithelial and endothelial compartment markers), or no clear cell type-defining marker genes. After removing cells in the low-quality clusters, we repeated the processing steps starting from RNA raw counts for each sample. This process was repeated until no more low-quality clusters were identified, which usually required one to three iterations. Cells in the final set of clusters passing this iterative QC were considered ‘whitelisted’. For each cell type cluster, marker genes were identified in a one-versus-all Wilcoxon rank-sum test versus all other clusters from the same organ, and filtered to obtain genes with a log2 fold change greater than 1.

All final cluster annotations are included as Supplementary Table 2. All cluster markers are summarized in Supplementary Table 3 and a subset is visualized in marker gene dot plots in Supplementary Note 2. All UMAP embeddings are included in Supplementary Note 2.

ATAC–seq peak calling, motif enrichment and chromVAR

We used ArchR (v1.0.2)102 to process filtered ATAC fragment files into ArchR projects per organ. After filtering to the final whitelisted cell barcodes from the iterative RNA processing workflow and transferring the clustering and cell type annotations, we called peaks per cluster using Macs2 (v2.2.7.1)103, merged peaks into a single reproducible peak set per organ using ArchR’s iterative overlap strategy, and created a cell-by-peak matrix of fragment counts. We identified marker peaks per cluster using a Wilcoxon rank-sum test and performed transcription factor motif enrichment within the marker peaks with a cutoff of FDR ≤ 0.1 and log2(fold change) ≥ 0.5. We calculated chromVAR22 motif deviations across all clusters within each organ22. For both of these analyses, we used a curated cisBP motif set of 1,141 unique human transcription factor motifs described in refs. 104,105. We created a global ArchR project by merging all 12 per-organ ArchR projects and an HDMA global caCRE set by iteratively overlapping peak sets called from individual clusters across all organs102,106.

Linkage of regulatory elements to genes with modified ABC model

We used the ABC approach to link caCREs to gene promoters. To ensure consistency of ABC enhancer regions as those in our HDMA global caCREs set, we adapted ABC24 to enable custom regions as inputs to the model. To create the custom region set, we used bedtools to merge the HDMA global caCREs set with the hg38 genome TSS set. Following the recommended scATAC workflow from the official ABC documentation (https://abc-enhancer-gene-prediction.readthedocs.io/en/latest/index.html), we used the pseudobulk ATAC–seq signal (without H3K27ac chromatin immunoprecipitation with sequencing (ChIP–seq)) as enhancer activity and estimated 3D contact frequency between enhancers and promoters using a power law function of genomic distance. ABC was run on each L1 cell cluster. The results were filtered to obtain enhancer–promoter links with an ABC score greater than 0.013, which corresponds to a 70% recall rate from the benchmark CRISPR dataset. Our modified ABC workflow is available at: https://github.com/GreenleafLab/ABC-Enhancer-Gene-Prediction-CustomRegions (commit b3d2156).

VISTA enhancer analysis

We filtered the results to obtain VISTA-validated enhancers (accessed 24 January 2024) that originated from humans or have a human sequence homologue and annotated as X-Gal positive in any organs present in HDMA, overlapped with HDMA global caCREs, and retained VISTA enhancers with a minimum of 75% (375 bp) overlap. If multiple caCREs overlapped the same VISTA enhancer, we chose the caCRE with the highest ATAC–seq signal, based on previous observations that VISTA enhancers often have a much smaller core element and enhancer activity does not depend on all regulatory elements within an enhancer107. ATAC–seq signal was scaled and log-normalized per L1 cluster then z-scored across clusters per enhancer. For each organ, a one-sided Wilcoxon rank-sum test was performed to calculate the statistical significance of the HDMA ATAC–seq signal enrichment in caCREs overlapping VISTA enhancers annotated as positive in that organ. For example, to test the significance of brain ATAC–seq signal enrichment, we first subsetted to HDMA brain clusters only, then compared ATAC–seq signal in caCREs overlapping VISTA brain-positive enhancers and caCREs overlapping VISTA brain-negative enhancers using a one-sided Wilcoxon rank-sum test. The effect size was calculated as the W statistic/(n1 × n2), where n1 is the number of caCREs in the brain-positive group and n2 is the number of caCREs in the brain-negative group. This effect size represents the AUROC probability that a given caCRE in the brain-positive group will have higher ATAC signal than a caCRE in the brain-negative group. Similarly, for the RNA data, we first identified the nearest gene for each caCRE overlapping a VISTA enhancer, scaled and log-normalized the raw RNA expression counts per L1 cluster, and then z-scored expression values across clusters per enhancer. An analogous Wilcoxon rank-sum test was performed for each organ to assess the statistical significance of the HDMA RNA signal enrichment in VISTA positive enhancers. To avoid numerical underflow to zero at machine precision in Wilcoxon rank-sum tests, P values were calculated on the log10 scale and reported accordingly.

Preparation of input regions for ChromBPNet models

To define genomic regions for training ChromBPNet models, we performed a second round of peak calling to obtain a lenient set of accessible regions. First, pseudobulk fragment files for each of the 203 L1 cell type clusters were generated by concatenating fragments from the SHARE-seq ATAC–seq modality for all cells in that cluster, from all samples. For each pseudobulk, we then derived pseudoreplicates. For each fragment, starts and ends (corresponding to Tn5 insertion sites) were randomly allocated to each of two pseudoreplicate files, and pseudoreplicate files were also concatenated into a total-pseudoreplicate file. Macs2 (v2.2.9.1) was used to call peaks on all three pseudoreplicate files with parameters: -p 0.01–shift −75–extsize 150–nomodel -B–SPMR–keep-dup all–call-summits. Only peaks called on the total-pseudoreplicate which overlapped peaks called in both pseudoreplicates were retained. Peaks overlapping the GRCh38 ENCODE blacklist (ENCODE accession ENCFF356LFX) were excluded. Peak coordinates were adjusted to 1,000 bp centred at the Macs2 peak summit. Pseudoreplicates were only used for peak calling, and pseudobulk fragment files were used for downstream model training.

We used the ChromBPNet package (https://github.com/kundajelab/chrombpnet, commit a5c231) and followed the workflow described17. We used the command chrombpnet prep nonpeaks to define background regions that match the GC content of peak regions. For each cell type, we used a fivefold cross-validation scheme, where each fold (designated 0 to 4) comprised a different set of training, validation, and test chromosomes, with each chromosome in the test set of at least one fold. We used the default human chromosome folds provided with ChromBPNet108.

ChromBPNet model training

ChromBPNet models are supervised convolutional neural networks trained to use 2,114-bp one-hot-encoded DNA sequence in peaks and background regions to predict the accessibility profile (as a probability distribution) and total natural log counts (as a scalar value) in the central 1,000-bp window of input regions. ChromBPNet models use a pre-trained bias model, and then explain the residual accessibility not captured by Tn5 enzyme bias. We trained a bias model to learn the enzymatic bias in the SHARE-seq setting using fold 0 for the heart L1 cluster 0 pseudobulk, with bias threshold factor -b 0.4 using the chrombpnet bias pipeline which also performs model interpretation using DeepLIFT. We confirmed that the bias model learned the Tn5 motifs but not transcription factor motifs, and used this bias model to subsequently train ChromBPNet models for five folds for each of the 203 L1 cell types (1,015 models total) using the chrombpnet pipeline command with the GRCh38 reference genome from ENCODE (fasta: https://www.encodeproject.org/files/GRCh38_no_alt_analysis_set_GCA_000001405.15/, with chromosome sizes from ENCODE accession ENCFF667IGK).

ChromBPNet model evaluation

Models were evaluated based on the Pearson and Spearman correlations between predicted and observed log counts in peaks and the Jensen–Shannon distance between predicted and observed profiles in peaks, for peaks on held-out test-set chromosomes (Supplementary Table 5 and Supplementary Note 2). Models for 4 cell types where the Spearman correlation for any fold was less than 0.5, generally corresponding to pseudobulks with low coverage, were excluded. To generate the average predicted accessibility tracks across folds for peak regions (representing counts per base), for each region, the mean predicted profile logits across folds were processed with the softmax function to convert them to probabilities, then scaled by the exponentiated mean predicted log counts across folds.

ChromBPNet model interpretation

We performed model interpretation to determine the extent to which each nucleotide was predictive for accessibility. We ran the chrombpnet interpret command which uses the DeepLIFT30 algorithm to compute contribution scores for each nucleotide in the 2,114-bp input windows with respect to the predicted counts. Contribution scores were derived for each model fold for all peak regions, and the mean computed across folds. The averaged predicted accessibility profiles and contribution scores were converted to bigWig files, as well as used for all analyses and figures.

De novo motif discovery and assembly of the motif lexicon

Assembly of the de novo motif lexicon required three steps: (1) de novo motif discovery per cell type; (2) collapsing similar motifs across cell types; and (3) motif annotation.

-

1)

First, for de novo motif discovery on sequences driving chromatin accessibility, we used TF-MoDISco31, which, in brief, identifies seqlets, corresponding to short spans of contiguous high positive-importance or high negative-importance nucleotides, and clusters them into recurrent 30 bp patterns. We used the implementation in the TF-MoDISco-lite package (https://github.com/kundajelab/tfmodisco, v2.0.7) on the mean contribution scores for each cell type, sampling 1,000,000 seqlets for both positively and negatively contributing seqlets (parameter -n 1,000,000), and using the default behaviour to search for seqlets in the central 400 bp of input regions. Each de novo motif is represented by a 4 × 30 CWM, computed as the mean contribution score per position per nucleotide across its seqlets, and a 4 × 30 position probability matrix (PPM), computed by normalizing the nucleotide frequencies per position across its seqlets. We manually inspected CWMs learned in each cell type, and the ChromBPNet models and motifs for ten cell types which predominantly learned low-complexity motifs were excluded from downstream analysis. This resulted in 189 cell types (945 models total) passing quality control and used throughout our study, which collectively learned 6,362 motifs including both positively contributing and negatively contributing motifs.

-

2)

Next, we automatically consolidated the 6,362 motifs into a non-redundant set. We first derived clusters of motifs which were broadly similar. CWMs were trimmed by removing positions where the total contribution across nucleotides was less than 30% of the maximum total contribution among all positions17. PPMs were trimmed to the same positions as the trimmed CWMs, and converted to position frequency matrices (PFMs) by multiplying PPMs by the total number of seqlets associated with each motif. PFMs from all cell types in each organ were first leniently clustered, separately for positive and negative motifs, using the gimmemotifs package109 (v0.18.0, with command gimme cluster -t 0.8), which returns an average PFM for each motif cluster. Average PFMs from across organs were then subjected to a second round of clustering with gimmemotifs. Within each broad motif cluster, we then collapsed the constituent CWMs originating from individual cell types using the SimilarPatternCollapser functionality in TF-MoDISco, which merges together similar motifs using the same method it does for seqlets during initial motif discovery in step (1). This procedure resulted in 834 motifs.

-

3)

Finally, we performed motif quality control, and annotated and categorized each motif. For annotation, we used TOMTOM110 (v4.11.2) to compute similarities between the 834 de novo motif CWMs and a curated set of 5,193 known transcription factor binding site position weight matrices (PWMs) from JASPAR, CIS-BP, and other studies (obtained from https://resources.altius.org/~jvierstra/projects/motif-clustering-v2.1beta/), using the command tomtom -no-ssc -oc.–verbosity 1 -text -min-overlap 5 -mi 1 -dist pearson -evalue -thresh 10.0. For every motif, we manually inspected the most similar PWMs to assign a provisional label, typically at the transcription factor family level. We further collapsed highly similar motifs missed by the clustering approach, retaining the motif with the highest number of seqlets across cell types. We flagged 107 motifs which were low-complexity, noisy, or dominated by CG dinucleotides for exclusion. We categorized motifs as ‘base’ if they matched known PWMs; ‘base with flanks’ if they matched known PWMs but exhibited additional high-contribution nucleotides; and ‘homocomposite’ or ‘heterocomposite’ if the motifs clearly matched two similar or distinct known motifs, respectively. Motifs that did not resemble known PWMs were labelled as ‘unresolved’. After exclusion of low-quality motifs, this resulted in a set of 508 non-redundant motifs used for downstream analysis (Supplementary Table 6). Motif labels have the naming scheme ‘<unique_ID > |<family > :<subfamily > /<alternative_subfamily > #<index > ’. The unique ID is a value from 0 to 508 (one ID corresponding to the low-quality motifs was filtered out). The index is used to distinguish separate motifs which each match the same known motif, typically representing subtle variations in nucleotide preferences or flanks. Composite motifs used a similar naming scheme, with labels for constituent motifs separated by underscores. Motifs were also assigned a broad label (for example, ‘CTCF’ and ‘CTCFupstream’ motifs shared a broad ‘CTCF’ label), used throughout figures and analyses to aggregate results.

Identification of predictive motif instances

To identify genomic instances of de novo motifs in each cell type, we used the Fi-NeMo package (https://github.com/kundajelab/finemo_gpu, v0.23, commit b81876d), which performs motif scanning in a contribution-aware manner. In brief, Fi-NeMo fits a sparse linear regression model for each peak region to minimize the difference between the contribution scores in each region, and a reconstruction of the scores from a weighted combination of trimmed input CWMs. In the coefficient matrix, a non-zero coefficient at a certain location indicates a motif instance in that location. For each cell type, the output of Fi-NeMo is a BED file of predictive instances across all peaks, representing short regions which both match motifs by sequence and have relatively high absolute contribution scores. We first ran Fi-NeMo with all 834 clustered CWMs, with parameters –alpha 0.8 –trim-threshold 0.3 and defaults otherwise (parameter alpha is now known as lambda). Due to the competitive nature of motifs in the linear modelling approach, we ran Fi-NeMo a second time with a reduced set of 436 motifs after excluding composite motifs. We performed post-hoc filtering of motifs to obtain a high-confidence annotation for downstream analysis. To evaluate the quality of instance calls for a given motif, Fi-NeMo computes the correlation between the input CWM and the CWM derived from averaging contribution scores for all Fi-NeMo-identified instances for that motif. For each cell type, instances from the two Fi-NeMo runs were concatenated, and motifs where correlation between the instance-CWM and the input CWM was less than 0.9 were flagged. Next, all instances of these flagged motifs were filtered out. Finally, to reduce redundancy, if multiple instances with the same annotation overlapped by more than 3 bp, only the instance with the highest ‘hit_correlation’ value was retained, representing the instance with the contribution scores having the highest similarity with the corresponding input CWM. This step resulted in final motif annotation for each cell type consisting of their predictive motif instances.

Inference of nucleosome positions from ATAC modality

We used NucleoATAC34 to infer nucleosome position and occupancy from the SHARE-seq ATAC modality data. In brief, to determine nucleosome occupancy, NucleoATAC models the observed size distribution of ATAC fragments as a mixture of nucleosomal and nucleosome-free fragments, and the maximum likelihood fraction of nucleosomal fragments at a given position is used as a continuous occupancy signal. We adapted the original code to take fragments as input (https://github.com/sjessa/NucleoATAC, v0.4.1), and ran the NucleoATAC workflow for each cluster pseudobulk fragment file in the same peak regions used to train ChromBPNet models. The outputs of the nucleoatac occ command were used downstream: the nucleosome dyad position calls were used for analysis of motif instances, and the per-nucleotide maximum likelihood fraction of nucleosomal fragments were used for visualization of nucleosome occupancy as genomic tracks.

Annotation of motif instances

To annotate predictive motif instances with respect to genomic features, instances were assigned as occurring in promoters if they were within 2 kb upstream or downstream of TSSs, exonic if they overlapped exons, intronic if they overlapped gene bodies but not exons, and distal otherwise. Genomic features definitions were based on the Bioconductor TxDb.Hsapiens.UCSC.hg38.knownGene (v3.14.0) annotation, corresponding to the UCSC knownGene track from GENCODE V38, and assembled using the createGeneAnnotation function in the ArchR package. Motif instance distance to TSS was computed as the distance between instance centre positions and TSS defined in the same annotation. Similarly, for each cell type, motif distance to the nucleosome dyad or peak summit was computed by calculating the distance between instance centre positions and the nearest dyad inferred with NucleoATAC, or the peak summit, respectively. For analysis, we counted motif instances in 10 bp bins from 0 to 250 bp from the dyad or peak summit.

Motif co-occurrence analysis

To identify co-enriched motifs, we tested for pairwise motif co-occurrence enrichment within the ChromBPNet training peaks for each cell type. We restricted the analysis to the set of base motifs within the de novo compendium, and grouped motifs by their broad annotations. For each motif pair, in each cell type, we populated a 2 × 2 contingency table with the number of peaks containing both, one, or neither motif. We then performed a one-sided Fisher’s exact test to calculate a P value for enrichment. To correct for multiple hypothesis testing across all pairs, we adjusted the P values using the Benjamini–Hochberg method. Significant co-occurrence was assigned for motif pairs with adjusted P values < 0.05.

In silico marginalizations to assess motif synergy

We used the trained ChromBPNet models to assess motif synergy following previous approaches19,42. In this in silico marginalization strategy, the predicted effect of a short sequence on chromatin accessibility is quantified by inserting the sequence into a library of background, non-accessible genomic regions (replacing the central nucleotides); and predicting accessibility for each background and edited region with a forward pass through a trained ChromBPNet model. By averaging the difference in predicted natural log counts between the edited and background regions over many regions, we estimate the ‘marginal’ effect of a sequence. Two or more sequences can be inserted to estimate joint effects of those sequences on accessibility. Specifically, for two motifs A and B, we define the predicted log counts for a region with one motif or both inserted as \(y_\rmA\), \(y_\rmB\) and \(y_\rmj\) respectively; and the predicted log counts for an unedited background region as \(y_\). The marginal effects, in log counts, of motif A and B are \(\Delta _\rmA=y_\rmA-y_\) and \(\Delta _\rmB=y_\rmB-y_\), respectively, and their joint effect is \(\Delta _\rmJ=y_\rmJ-y_\). We define the independent effects of motifs A and B as \(\Delta _\rmS=\Delta _\rmA+\Delta _\rmB\), corresponding to a log-additive model for independent effects, or multiplicative model in units of counts. Synergy can then be defined as a significant increase in the joint effects \(\Delta _\rmJ\) of two sequences relative to their independent effects \(\Delta _\rmS\).

To implement the synergy analysis, we used the tangermeme package41 (https://github.com/jmschrei/tangermeme, v0.4.3). We first filtered the set of de novo composite motifs such that each composite was composed of a unique pair of constituent motifs, obtaining 138 composite motifs. For each constituent, we identified the base de novo motif with the highest number of motif instances, trimmed the CWM as above, and defined the consensus sequence as the nucleotide with the highest contribution score at each position in the trimmed motif. The motifs and associated sequences tested are presented in Supplementary Table 7. Sequences were manually adjusted to further remove uninformative flanks or better match the composite motif, and deduplicated so that a pair of the same sequences was only tested once. One hundred background regions were randomly selected from GC-matched background regions for each cell type. For a pair of two of the same motifs, there are three unique orientations, and for a pair of two distinct motifs, there are four unique orientations. We considered each combination of orientation and distance between motifs an ‘arrangement’ of motifs. For each composite motif, the constituent motif sequences A and B were inserted at all possible orientations and distances (from 0 to 200 bp) in the 100 background sequences, and accessibility predicted for background and edited sequences using all five ChromBPNet model folds (for the cell type with the most predictive instances of that composite motif) to compute \(\Delta _\rmJ\) (Supplementary Note 3). Similarly, A and B were inserted alone to compute independent and sum of independent effects \(\Delta _\rmA\), \(\Delta _\rmB\), and \(\Delta _\rmS\). Effects are computed for each sequence and model fold and the mean effect is reported (Supplementary Table 7). For joint effects, the effect of the motif pair at their optimal arrangement (that is, the combination of orientation and distance with the greatest mean effect \(\Delta _\rmJ\)) is reported. We considered each motif pair at their optimal arrangement and used a Wilcoxon signed-rank test to test whether the paired differences in joint and independent effects at that arrangement (\(\Delta _\rmJ-\Delta _\rmS\)) were significantly greater than 0. Multiple testing correction was performed using the Benjamini–Hochberg method. Composite motifs with adjusted P value < 0.001 and \((\Delta _\rmJ-\Delta _\rmS)\) > 0.15 were annotated as synergistic. To confirm that inserted sequences were driving the predicted synergistic effects, we performed model interpretation using DeepLIFT as above on edited sequences, and verified that the sequences predictive of accessibility corresponded to the sequences we inserted. This identified 18 composite motifs where predicted effects were driven by different nucleotides than the ones inserted; and we abstained from classifying synergy for these motifs, resulting in 120 motifs with synergy classifications (Supplementary Table 7).

To define synergistic motifs with syntax preferences (that is, with synergy limited to or increased at specific binding site arrangements), for each composite motif, we computed z-scores across the joint effects \(\Delta _\rmJ\) at all arrangements. Composite motifs that had any arrangement with an effect greater than four standard deviations from the mean (z-score > 4) were annotated as having hard syntax. We also considered that motifs could have weaker long-range preferences, or soft syntax. Composite motifs with \((\Delta _\rmJ-\Delta _\rmS)\) > 0.15 at any arrangement where constituent motifs were between 20 and 150 bp apart were annotated as having soft syntax.

To validate the specificity of our predictions, we repeated the in silico marginalization experiments for the 138 motif pairs in all 189 cell types with ChromBPNet models passing QC (Supplementary Note 3). For each cell type, sequences were inserted into its respective background regions, and mean effects were computed across 100 sequences and 5 model folds. Effects were computed as above, and multiple testing correction was performed using the Benjamini–Hochberg method. To assess cell-type specificity of predicted synergistic effects, for select composite motifs, we analysed the in silico marginalization for the optimal arrangement across all cell types.

In silico motif ablations

Similarly, for select motifs, we implemented in silico ablations using tangermeme, using trained ChromBPNet models for one cell type (Heart_c3, fibroblasts). For each motif, we computed quartiles of the motif instances based on the Fi-NeMo hit correlation score, and randomly sampled 250 motif instances from each quartile (1,000 sequences total). To ablate motifs in silico, we ‘neutralized’ each instance by replacing the base at each position with the base that had the smallest absolute value contribution score in the hypothetical DeepLIFT scores—that is, the most neutral base. The hypothetical contribution scores are counterfactual estimates of the contributions of all three alternate bases at each position, had it been observed in the sequence context, and are computed using DeepLIFT as part of the ChromBPNet model interpretation workflow.

Ranking of motifs by prevalence and importance

To assess motif prevalence and importance, we focused on motifs learned in each cell type in the cell-type-specific TF-MoDISco motif discovery step, and only considered positive motifs. To compute motif prevalence, for each cell type, we obtained the set of motifs learned in that cell type, and the number of motif instances in that cell type for the corresponding lexicon motifs. We ranked motifs within the cell type based on their number of instances, and normalized ranks by dividing each rank by the maximum rank in that cell type such that normalized ranks fell in the range [0, 1]. Similarly, to compute motif importance, for each cell type, we obtained the set of motifs learned in that cell type and summed the contribution scores across nucleotides and positions for the corresponding trimmed CWM. Finally, to compare prevalence and importance of different classes of motifs defined in the synergy analysis, we grouped motifs based on whether they had hard syntax, soft syntax, no predicted synergy, or were not tested (meaning the motif was not a composite motif, or filtered out of the synergy analysis as described above). Motifs with both hard and soft syntax were grouped with hard syntax motifs. We then computed the mean normalized rank across motifs in each group, within each cell type.

Motif footprinting

For select broad motifs (CTCF, ZEB/SNAIL, NFY, the NFY negative motif, YY1/2 and the YY1/2 negative motif), we used our deep learning-derived motif instances, filtered to the most common variant of the motif, and then split instances into quartiles based on the Fi-NeMo hit correlation score. To compute motif footprint metaplots from the SHARE-seq ATAC modality, we used the BPCells footprint function, which counts insertions (that is, fragment starts and ends) that fall within each position in 500-bp windows centred at motif instances. The insertion counts at each position are then divided by the mean insertion count in the outer 10% on each side of the window, taken as a measure of the local background accessibility.

Enrichment of eQTL variants in motifs

We obtained tissue-specific GTEx v8 eQTL data54 and concatenated them by organ source to match our fetal organs, aggregating the tissues as follows: adrenal gland, brain (amygdala, anterior cingulate cortex BA24, caudate basal ganglia, cerebellar hemisphere, cerebellum, cortex, frontal cortex BA9, hippocampus, hypothalamus, nucleus accumbens basal ganglia, putamen basal ganglia, spinal cord cervical c-1, substantia nigra), heart (artery aorta, artery coronary, atrial appendage, left ventricle), lung, liver, muscle (artery tibial, skeletal), skin (not sun exposed suprapubic, sun exposed lower leg), spleen, stomach/oesophagus (stomach, oesophagus gastro-oesophageal junction, oesophagus mucosa, oesophagus muscularis) and thyroid. We obtained unique lists of variant-gene pairs per organ by selecting the variant with the higher posterior inclusion probability score in case of duplicates.

For each organ, the log2 allelic fold change effect sizes (aFCs) for each variant-gene pair was used to determine the direction of variant effect on gene expression (upregulating or downregulating expression). Separately for each organ, we concatenated all motif instances from each cell type, then deduplicated entries with identical motif names and genomic positions. The number of upregulating and downregulating variants that overlapped positive or negative motif instances from the matched fetal organ were counted separately to obtain observed counts. aFCs were then randomly shuffled and direction of effect reassigned before the counting was repeated. We performed 100,000 shuffles per organ. Enrichment scores were defined as observed counts divided by the mean of the 100,000 shuffled counts. Enrichment P values were calculated as the proportion of shuffles where shuffled counts were larger than observed counts. Multiple testing correction using the Benjamini–Hochberg method was applied to the P values and a motif was considered significantly enriched with upregulating or downregulating variants if the FDR was <0.05 and the observed count was above the 95% confidence interval of shuffled counts for that type of eQTL variant. Two-sided Fisher’s exact tests were performed separately for positive and negative motifs to compare the number of motifs with or without significant enrichment with upregulating or downregulating eQTL variants (Supplementary Table 8).

Enrichment of cell types with disease variants using g-chromVAR

We used g-chromVAR60 to compute cell-type-specific enrichment of disease variants. As all genetic variants in CAUSALdb59 are reported in hg19 coordinates, ChromBPNet model input peak sets from fetal cell types were lifted over from hg38 to hg19 coordinates, and these were used as input to g-chromVAR. All coordinate conversions were performed using liftOver111 as implemented in the rtracklayer (v1.54.0) R package112 and the hg38ToHg19.over.chain.gz or hg19ToHg38.over.chain.gz chain files obtained from the UCSC Genome Browser. SuSiE scores for each variant listed in CAUSALdb were separately collated for all 13,710 studies in the database. CAUSALdb contains variants where linkage disequilibrium information was missing in some GWASs, which affects the causal variant estimation in the process of fine-mapping. These variants were given default PIP values of −1 in the database and were removed from our analysis. We separately ran g-chromVAR (v0.3.2) for each organ to obtain z-scores and P values of cell type enrichments with credible variants per study. We used the PIP values generated by SuSiE69 and filtered out studies where the sums of the PIP values across credible variants in that study were <5 to remove studies wherein we would be underpowered, retaining results from 13,194 studies. Multiple testing correction using the Benjamini–Hochberg method was applied to all P values and a cell type was considered significantly enriched with variants from a particular study if the FDR was <0.05. We next manually extracted the subset of the significant results corresponding to disease traits relevant to the organs in HDMA (see Supplementary Table 10 for the list of studies). Potentially causal variants (SuSiE PIP ≥ 0.8) were lifted over from hg19 to hg38 coordinates and overlapped with fetal motif coordinates. For each fetal cell type in our dataset, we then manually identified matching adult tissues and cell types with snATAC–seq data in ENCODE, and obtained their snATAC–seq pseudoreplicated peak sets from ENCODE70 (Supplementary Table 12). Causal variants overlapping fetal motif instances were subsequently overlapped with the relevant adult peak sets (peaks with −log10 P value > 2) if available. Only variants found in fewer than two matched adult peak sets were considered as ‘fetal-only’ hits.

Prediction of variant effect using ChromBPNet models

We predicted and interpreted effects of specific non-coding variants on chromatin accessibility using trained ChromBPNet models, as we have done previously7. We used the tangermeme package for predictions and model interpretation. For each variant, we used the 1,000 bp model training peaks for the relevant cell type to extract the reference genome sequence for the peak which the variant overlapped. This sequence (extended equally on either side to 2,114 bp) was fed to all fivefold trained ChromBPNet models for the relevant cell type, to obtain predicted accessibility profile and aggregate log counts in the peak. For each fold, to transform predicted profile logits into accessibility profiles, the profile logits were softmaxed and scaled by the exponentiated predicted log counts; and model interpretation with respect to the counts output was performed using DeepLIFT. Next, the effect allele was substituted into the sequence at the variant position, and predictions and contribution scores were obtained as for the reference sequence. For each model, we computed the variant effect as the sum of differences in per-base predicted read counts in the 100 bp window centred at variant, and computed the mean effect score across folds. We also computed the log2 fold change between predicted counts for the effect versus the non-effect allele for the peak region, where a log2 fold change >0 indicates the effect allele was predicted to increase accessibility. In figures, the mean predicted profiles and contribution scores across folds are shown.

Genome browser visualizations

For all data visualization at specific genomic loci, we used the BPCells R package113 (https://github.com/bnprks/BPCells) along with custom scripts included in our code repository. All genomic tracks are hosted online for interactive visualization with the WashU Genome Browser (https://epigenomegateway.wustl.edu/browser2022/?genome=hg38&hub=https://human-dev-multiome-atlas.s3.amazonaws.com/tracks/HDMA_trackhub.json).

Statistics and reproducibility

No statistical method was used to predetermine sample size. No data were excluded from the analyses. The experiments were not randomized. The investigators were not blinded to allocation during experiments and outcome assessment. For box plots throughout the figures, the elements represent the following: centre line, median; box limits, upper and lower quartiles; whiskers, minima and maxima that are no further than 1.5× interquartile range.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.