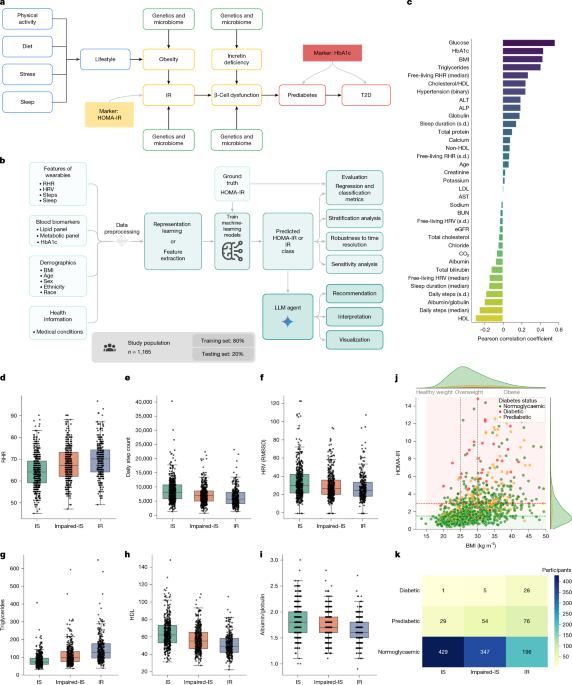

Remote recruitment of participants through the GHS platform

The study protocol was approved by Advarra (IRB no. Pro00074093). Participants were recruited using the Google Health Studies (GHS) and the Fitbit applications. GHS is a platform for running digital studies that allows participants to enrol, check eligibility and provide informed consent. GHS enables the collection of data from wearables (Fitbit devices or Pixel watches) and allows participants to complete questionnaires and order blood tests through Quest Diagnostics. In addition to the consent, participants were asked to sign the Quest HIPAA authorization as part of the consent flow within the GHS app. This study resulted in the enrolment of 4,416 participants in the USA, a subset of whom (n = 1,165) had complete data and were therefore included in our analysis. The study was performed remotely with one visit to a Quest Patient Service Center for a blood draw. Participants were asked to wear their wrist-worn devices continuously, schedule and complete a blood draw and answer questionnaires.

Inclusion criteria included: participants residing in the USA aged between 21 and 80; Android Fitbit users who wear a Fitbit device or a Pixel watch with heart-rate-sensing capabilities; users who have at least three months of existing data in which they have used the device for at least 75% of the days to track their activity and sleep; participants willing to update their Fitbit Android app; participants willing to install or update their GHS app on their Android phone; participants willing to link their Quest Diagnostics account to the GHS app (or create an account); participants able to speak and read English and provide informed consent and HIPAA authorization to take part in the study; and participants with access to and who are willing to go to a Quest Diagnostics location for blood draws.

Exclusion criteria included participants living in Alaska, Arizona, Hawaii and the US territories, because Quest Diagnostics cannot provide blood tests in those locations; participants with uncontrolled disease (for example, a recent change in treatment in the past six weeks, awaiting review to trigger a change of treatment, a treating physician has indicated the condition is not yet controlled, or where symptoms of the condition are not responding to treatment); and participants with conditions that might make collecting blood samples through venipuncture impractical.

Study design

As part of this study, participants were asked to link their Fitbit account with the GHS app. They were also asked to grant GHS permission to collect Fitbit data throughout the study, including data for up to three months before study enrolment. Once participants were enrolled in the study they were asked to do the following: (1) wear their Fitbit device or Pixel watch during the day and while they sleep (at least three out of every four days) for the duration of the study; (2) complete four questionnaires, which covered (i) demographic information, (ii) health history and health information, such as sleep and exercise habits, (iii) participant perception of health, and (iv) blood test interpretation (see below for details); (3) schedule an appointment to complete the laboratory test orders that were placed for them and go to a Quest Patient Service Center for a blood draw within 65 days of enrolment; (4) complete a blood draw at the Quest Patient Service Center; (5) review blood test results in the GHS app when available.

Collection of metadata

Demographics (for example, age, gender, ethnicity, weight and height), optional measures, such as medical conditions (for example, diabetes, hyperlipidaemia, cardiovascular disease, kidney disease, hypertension and so on), blood pressure, waist circumference, medications, self-reported health management and habits were collected through a baseline questionnaire that participants completed immediately after enrolment through the GHS app.

Blood biomarker measurements at Quest Diagnostics

Eligible participants were asked to schedule and complete a visit to a Quest Diagnostics Patient Service Center of their choice in their local area. This visit included a standard blood draw to measure the following: complete blood count, CMP, insulin, total cholesterol, triglycerides, HDL cholesterol, calculated LDL cholesterol, HbA1c, high-sensitivity CRP, hepatic panel, gamma-glutamyl transferase (GGT) and total testosterone. For this research study, we only had access to this predefined set of laboratory tests and did not collect or receive any blood test data not included in this study. Participants were asked to have their blood drawn while fasting for at least eight hours, in the early morning, 07:00–10:00 local time, to minimize the effect of the solar diurnal cycle. This study also had clinical oversight by a physician network partner. Laboratory results from the blood draw were returned to participants and made available for participant review in the GHS app for the duration of the study; however, these were pulled directly from Quest Diagnostics each time this was requested by the participant and were not stored in the GHS app. Participants provided consent and HIPAA authorization to grant GHS permission to collect the corresponding results from Quest Diagnostics. Data transferred from Quest Diagnostics were retrieved securely using encrypted protocols. Once the study was completed, laboratory results remained in the participant’s Quest Account in accordance with Quest’s standard practices.

Data from wearables (Fitbits and Pixel watches)

Participants were asked to wear their own Fitbit or Pixel watch, which can include sensors and metrics such as photoplethysmogram (PPG), gyroscope, altimeter, accelerometer, on-wrist skin temperature, blood oxygen saturation (SpO2) and ambient light sensor. Participants consented to share the following data from these devices: (1) heart-rate metrics: heart rate, RHR captured daily, interbeat interval (IBI, also known as RR interval) calculated from the PPG sensor, and HRV metrics (such as RMSSD, standard deviation of normal-to-normal intervals, standard deviation of RR intervals, percentage of successive normal-to-normal heartbeats that differ by more than 50 ms, and so on); (2) physical activity metrics: steps, floors and active zone minutes (AZMs); (3) sleep metrics: bed time, wake time, sleep duration, sleep stages, sleep quality, sleep coefficients and sleep logs; (4) respiration and skin temperature: respiration rate values during the day and night and skin temperature (if the sensor is available on the wearable); (5) blood oxygen saturation (SpO2) values during the day and night (if available on the wearable); (6) weight: measure of weight that may be logged in the Fitbit account; and (7) exercise and activity data: daily total exercise sessions completed and logs of activities that have been classified. Fitbit daily RHR is calculated from periods of stillness throughout the day, as determined by the on-device accelerometer. If a person wears their device while sleeping, their sleeping heart rate is also included in the calculation. Daily RMSSD HRV is calculated from pulse intervals measured during sleep periods greater than three hours. AZMs are a feature that tracks the time a person spends in different heart-rate zones during physical activity. A person receives one AZM for every minute spent in the ‘moderate’ zone, and two AZMs for every minute spent in the ‘vigorous’ or ‘peak’ zones. The heart-rate zones are based on the percentage of heart-rate reserve achieved, in which the heart-rate reserve is the difference between the maximum heart rate and the RHR. The moderate zone is defined as 40–59% of heart-rate reserve, vigorous as 60–84% and peak as 85–100%.
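The zone definitions above can be sketched in a few lines of Python. This is an illustration only, not Fitbit's implementation: the function names are hypothetical, the maximum heart rate is taken as a given input, and treating the zone boundaries as continuous cut-offs at 40%, 60% and 85% of heart-rate reserve is an assumption.

```python
# Hypothetical helper names; thresholds follow the zone definitions in the text.
AZM_PER_MINUTE = {"moderate": 1, "vigorous": 2, "peak": 2}


def heart_rate_zone(hr, resting_hr, max_hr):
    """Return the zone for one heart-rate sample, or None below 40% of reserve."""
    reserve = max_hr - resting_hr  # heart-rate reserve
    pct = 100.0 * (hr - resting_hr) / reserve
    if pct >= 85:
        return "peak"
    if pct >= 60:
        return "vigorous"
    if pct >= 40:
        return "moderate"
    return None


def active_zone_minutes(minute_hr, resting_hr, max_hr):
    """Sum AZMs over a list of per-minute heart rates."""
    total = 0
    for hr in minute_hr:
        zone = heart_rate_zone(hr, resting_hr, max_hr)
        total += AZM_PER_MINUTE.get(zone, 0)
    return total
```

For example, with an RHR of 60 bpm and a maximum heart rate of 180 bpm, a minute at 108 bpm sits at 40% of reserve and earns one AZM, whereas a minute at 132 bpm (60% of reserve) earns two.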

Selection of HOMA-IR thresholds for IR

Our selection of HOMA-IR thresholds was based on a previous study24, which reported a HOMA-IR range of 1.5 to 3 for IR. We chose a HOMA-IR threshold of 2.9, approximating the midpoint between the NHANES-derived threshold for the US population (HOMA-IR = 2.77) and the maximum value in the review (HOMA-IR = 3). For insulin sensitivity, we used the lowest threshold from the review, HOMA-IR = 1.55, which was rounded down to 1.5. Participants with HOMA-IR values between 1.5 and 2.9 were classified as impaired-IS.
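As a minimal sketch of the resulting three-way labelling, the thresholds can be applied as follows. The function name is hypothetical, and the handling of a value exactly equal to 1.5 (labelled impaired-IS here) is an assumption, because the text does not state which side of that boundary is inclusive.

```python
IS_THRESHOLD = 1.5  # insulin-sensitivity cut-off from the text
IR_THRESHOLD = 2.9  # insulin-resistance cut-off from the text

# The IR cut-off approximates the midpoint of the two reference values.
midpoint = (2.77 + 3.0) / 2  # 2.885, reported as 2.9


def classify_homa_ir(homa_ir):
    """Map a HOMA-IR value to 'IS', 'impaired-IS' or 'IR'."""
    if homa_ir >= IR_THRESHOLD:
        return "IR"
    if homa_ir < IS_THRESHOLD:  # assumption: 1.5 itself is impaired-IS
        return "IS"
    return "impaired-IS"
```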

Modelling and computational pipeline

Our method consists of four stages: (i) data preprocessing; (ii) modelling and training; (iii) prediction and classification of HOMA-IR; and (iv) LLM-based interpretation of the results. We describe each of these components, including evaluation strategies and large-scale ablation studies, below.

Data preprocessing

Age and BMI (demographics)

From the user-provided data on recruiting surveys, we extract a user’s age and compute BMI from height and weight. As a quality-control process, we exclude users with a BMI greater than 65, or lower than 12.
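A small sketch of this quality-control step is given below. The function names are hypothetical, and metric units (kilograms and metres) are assumed; the survey units are not stated in the text.

```python
def bmi(weight_kg, height_m):
    """Body mass index in kg per square metre."""
    return weight_kg / height_m ** 2


def passes_bmi_qc(value, low=12.0, high=65.0):
    """Keep users whose BMI is neither below 12 nor above 65."""
    return low <= value <= high
```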

Digital markers derived from wearables (wearable features)

Using the estimated digital markers from Fitbit algorithms, we aggregated users’ digital markers using mean, standard deviation and median values for the past n = {7, 14, 30, 60, 60} days before blood test collection. We performed ablation studies to find the ‘optimal’ value of n (described later in this section).
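The aggregation can be illustrated with the standard library alone. This is a sketch under stated assumptions rather than the study's pipeline: the function name and input layout (a mapping from calendar date to one daily value) are hypothetical, the window is taken as the n days strictly before the blood draw, and the sample standard deviation is used.

```python
from datetime import date, timedelta
from statistics import mean, median, stdev


def aggregate_window(daily_values, blood_draw_date, n_days):
    """Summarize one digital marker over the n_days before the blood draw.

    daily_values maps a date to that day's value; missing days are absent.
    """
    start = blood_draw_date - timedelta(days=n_days)
    window = [v for d, v in daily_values.items() if start <= d < blood_draw_date]
    if len(window) < 2:  # not enough data for a standard deviation
        return None
    return {"mean": mean(window), "std": stdev(window), "median": median(window)}
```

Calling the function once per marker and per candidate window length yields the feature table on which the window-length ablation can be run.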

Biomarkers from blood biochemistry (blood tests)

As a first filter, we removed any participant who was not fasting as reported in a survey at the time of blood test collection. In addition, for each experiment, we excluded participants with missing values from the input feature set. Lastly, to remove outliers, we used the true HOMA-IR value (HOMA-IR = (fasting insulin (µU ml−1) × fasting glucose (mg dl−1))/405) and excluded any participant whose HOMA-IR value was greater than or equal to 15.
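The HOMA-IR formula and the two record-level filters can be written compactly. The sketch below uses hypothetical function and field names (`fasting`, `insulin`, `glucose`); only the formula and the cut-off of 15 come from the text.

```python
def homa_ir(fasting_insulin_uU_ml, fasting_glucose_mg_dl):
    """HOMA-IR = insulin (uU/ml) x glucose (mg/dl) / 405."""
    return fasting_insulin_uU_ml * fasting_glucose_mg_dl / 405.0


def keep_participant(record, max_homa_ir=15.0):
    """Apply the fasting filter, then drop HOMA-IR values of 15 or more."""
    if not record["fasting"]:
        return False
    return homa_ir(record["insulin"], record["glucose"]) < max_homa_ir
```

For instance, a fasting insulin of 10 µU/ml with a fasting glucose of 81 mg/dl gives HOMA-IR = 810/405 = 2.0.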

Data standardization

The data used for modelling are a concatenation of demographics, aggregated digital markers and blood biomarkers. To create consistent modelling data that were agnostic to the learning model, we standardized input features to have zero mean and unit variance. For each training fold, our ‘normalizer’ object was fitted to the data in the training subset (not including samples in the testing subset). The fitted object was then used to transform the samples in both the training and the testing subset. The standardized data were used for all modelling tasks and evaluations.

To test the generalizability of our approach to the validation cohort, we used the same normalizer object that was fitted to the training data from the initial cohort.
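The fit-on-training-only procedure is sketched below with a small, dependency-free stand-in for the normalizer object. The class name is hypothetical, and using the population standard deviation (with constant columns left unscaled) is an assumption.

```python
from statistics import mean, pstdev


class Standardizer:
    """Per-feature z-scoring whose statistics come from the training fold only."""

    def fit(self, rows):
        columns = list(zip(*rows))
        self.means = [mean(c) for c in columns]
        # Guard against constant columns, which have zero standard deviation.
        self.stds = [pstdev(c) or 1.0 for c in columns]
        return self

    def transform(self, rows):
        return [
            [(x - m) / s for x, m, s in zip(row, self.means, self.stds)]
            for row in rows
        ]


train = [[1.0, 10.0], [3.0, 30.0]]
test = [[2.0, 20.0]]
scaler = Standardizer().fit(train)    # statistics from the training subset
train_z = scaler.transform(train)
test_z = scaler.transform(test)       # same fitted object, no refitting
```

Reusing the fitted object on held-out data, including an external cohort, avoids leaking test-set statistics into the model inputs.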

Modelling

To determine the risk of IR, our goal was first to predict the value of HOMA-IR, and then to use existing thresholds for classifying individuals. Performing regression before classification allows for flexibility, greater interpretability of our results and analysis of individual data points.

Direct regression

As our first approach in modelling HOMA-IR, we used gradient boosting machines; specifically, the XGBoost framework48,49. Gradient boosting methods excel in handling complex datasets with potentially non-linear relationships, making them particularly well-suited for our task. Compared with conventional tree-based methods, XGBoost provides computational efficiency, scalability and regularization techniques that enhance model generalization. Although it is often associated with tree-based models, XGBoost offers a versatile framework for making use of weak learners, which include both linear and tree-based learners. Owing to the ambiguity that surrounds the linear interaction between various input features, we used both linear (L1–L2) and non-linear (tree-based) learners to assess the complexity of the problem space.

Given n data-label pairs \(({x}_{i},{y}_{i})\), gradient boosting can be viewed as an additive combination of K weak learners f that aims to predict the target \({\hat{y}}_{i}\) through:

$${\hat{y}}_{i}=\mathop{\sum }\limits_{k=1}^{K}{f}_{k}({x}_{i}),\quad {f}_{k}\in {\mathcal{F}}.$$

(1)

\({\mathcal{F}}\) denotes the space of all possible regression trees, \({\mathcal{F}}=\{f(x)={w}_{q(x)}\}\), with \(w\in {{\mathbb{R}}}^{T}\), where \(T\) is the number of leaves in the tree, and \(q:{{\mathbb{R}}}^{d}\to \{1,\ldots ,T\}\) represents the structure of each tree, which maps d-dimensional data points (that is, \({x}_{i}\in {{\mathbb{R}}}^{d}\)) to the corresponding leaf index. In our notation, \({f}_{k}({x}_{i})\) represents the prediction made by the k-th tree in the ensemble for the input sample \({x}_{i}\). Although the choice of learners f can determine the linearity of the model, the number of trees and number of leaves serve as key hyperparameters that can further control model complexity. The objective of extreme gradient boosting machines is to minimize the loss function

$${\mathcal{L}}(\phi )=\mathop{\sum }\limits_{i=1}^{n}l({\hat{y}}_{i},{y}_{i})+\mathop{\sum }\limits_{k=1}^{K}\varOmega ({f}_{k}),$$

(2)

where \(l({\hat{y}}_{i},{y}_{i})\) represents the conventional gradient-boosting loss function measuring the discrepancy between the predicted output \({\hat{y}}_{i}\) and \({y}_{i}\), and the regularizer \(\varOmega ({f}_{k})\) penalizes model complexity, where \(\varOmega (f)=\gamma T+\frac{1}{2}\lambda \Vert w{\Vert }^{2}\), with \(\gamma \) and \(\lambda \) being hyperparameters.
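To make equations (1) and (2) concrete, the sketch below evaluates the additive prediction and the regularized objective for a toy ensemble. It illustrates the formulas and does not use the XGBoost library; the function names are hypothetical, and choosing squared error for the loss l is an assumption.

```python
def predict(trees, x):
    """Equation (1): the prediction is the sum of the weak learners' outputs."""
    return sum(f(x) for f in trees)


def regularizer(leaf_weights, gamma, lam):
    """Omega(f) = gamma * T + 0.5 * lambda * ||w||^2 for one tree."""
    n_leaves = len(leaf_weights)
    return gamma * n_leaves + 0.5 * lam * sum(w * w for w in leaf_weights)


def objective(y_true, y_pred, trees_leaf_weights, gamma, lam):
    """Equation (2), with squared error standing in for the loss l."""
    loss = sum((yh - y) ** 2 for yh, y in zip(y_pred, y_true))
    penalty = sum(regularizer(w, gamma, lam) for w in trees_leaf_weights)
    return loss + penalty


# Two toy learners: a two-leaf stump and a single-leaf constant.
stump = lambda x: 1.0 if x[0] < 0.5 else 2.0
constant = lambda x: 0.5
y_hat = predict([stump, constant], [0.2])
total = objective([1.0], [y_hat], [[1.0, 2.0], [0.5]], gamma=1.0, lam=2.0)
```

Increasing γ penalizes every additional leaf, whereas increasing λ shrinks the leaf weights, which is how the two hyperparameters control model complexity.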

In our work, the first set of models uses weak linear learners that can capture additive linear relationships within the data. This approach assumes that the target variable can be modelled as a linear combination of the input features, potentially revealing important feature interactions (this is obtained by using gblinear from the XGBoost implementation50). Recognizing the potential for complex non-linear relationships within our data, we let the second set of models be non-linear (through gbtree in the XGBoost implementation). XGBoost’s efficient tree construction algorithm, based on a pre-sorted split finding algorithm and sparsity-aware split finding, allows for faster training and exploration of a larger parameter space. This, coupled with the inherent ability of decision trees to model non-linear interactions, makes the tree-based approach a powerful technique for uncovering potential complex patterns in our study.

Representation learning

Although direct regression methods, such as XGBoost, can effectively handle high-dimensional data, we hypothesized that their performance can be further amplified by providing informative and concise data representations. Studies have demonstrated the importance of mathematical representation of personal health records (blood tests, lifestyle data and so on) for various downstream tasks51. To test the representation learning hypothesis, we used representation learning techniques (specifically, MAEs), potentially learning latent representations that could enhance regression performance.

MAE

Although simple autoencoders (AEs) have been shown to be an effective unsupervised approach for representation learning, MAEs52, a self-supervised variant of AEs, have shown substantial improvements over conventional AEs, achieving state-of-the-art results across different benchmark datasets52,53. For our approach, given a datapoint \({x}_{i}\in {{\mathbb{R}}}^{d}\), we start by drawing a binary masking vector \(m\in {\{0,1\}}^{d}\) from a multivariate Bernoulli distribution with masking probability p (determined through ablation studies, as discussed later). Using the mask vector, we generate a masked version of the input, \({\hat{x}}_{i}={x}_{i}\odot m\), which is then passed to the MAE model. Similar to the conventional AE, the objective of our network is to reconstruct the initial input, but from the masked vector \({\hat{x}}_{i}\) as opposed to \({x}_{i}\). Therefore, our objective for the MAE can be expressed as:

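$${{\mathcal{L}}}_{{\rm{AE}}}(x)={{\mathbb{E}}}_{x}\parallel {\rm{Dec}}({\rm{Enc}}(x\odot m))-x{\parallel }^{2}+\lambda \cdot {\rm{SL}}(x,{\rm{Dec}}({\rm{Enc}}(x\odot m))).$$

(3)

Here, Enc and Dec denote the encoder and decoder networks, SL denotes the smooth L1 loss and λ is its weighting coefficient.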
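The masking step and the loss in equation (3) can be illustrated for a single datapoint as follows. This is a sketch under stated assumptions: the encoder and decoder are not implemented (a reconstruction vector is passed in directly), the function names are hypothetical, a mask entry of 0 is taken to mean 'masked', and the smooth L1 term uses the common mean-reduced definition with a transition point of 1.

```python
import random


def bernoulli_mask(d, p, rng):
    """Binary mask of length d; each entry is masked (0) with probability p."""
    return [0 if rng.random() < p else 1 for _ in range(d)]


def apply_mask(x, m):
    """Element-wise product of x and m."""
    return [xi * mi for xi, mi in zip(x, m)]


def smooth_l1(a, b, beta=1.0):
    """Mean smooth L1 distance: quadratic near zero, linear beyond beta."""
    total = 0.0
    for ai, bi in zip(a, b):
        diff = abs(ai - bi)
        total += 0.5 * diff * diff / beta if diff < beta else diff - 0.5 * beta
    return total / len(a)


def mae_loss(x, reconstruction, lam=0.01):
    """Squared reconstruction error plus lam times the smooth L1 term."""
    squared = sum((r - xi) ** 2 for r, xi in zip(reconstruction, x))
    return squared + lam * smooth_l1(x, reconstruction)
```

In training, `reconstruction` would be the decoder output for the masked input, and the loss would be averaged over a batch to approximate the expectation in equation (3).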

Model training and HOMA-IR prediction from learned representations

The results presented for regression in this study include two stages: (i) representation learning and (ii) using the learned representation to train an XGBoost model with linear learners for predicting HOMA-IR values. We chose linear XGBoost models for the second stage to better showcase the improvements made possible by our representation learning approach.

We trained both models for a total of 500 epochs with the smooth L1 loss coefficient λ = 0.01. For optimization, we used the Adam optimizer with \(({\beta }_{1},{\beta }_{2})=(0.9,0.999)\) and \(\epsilon ={10}^{-12}\). The initial learning rate was set to lr = 0.001, scheduled to decrease exponentially (γ = 0.95) after 100 epochs on a plateau with a patience of 20 epochs. For the MAE model, we set the masking probability p = 0.75 based on our empirical results (found through grid search) and existing literature.

Parameter selection

To identify the set of parameters used for the ablation studies, we performed grid search for key parameters. For all models, because the computational cost of grid search grows exponentially with the number of parameters, we performed grid search on a common feature set and time window with fivefold cross-validation to identify optimal hyperparameter values. We describe the parameter grid for each model in Supplementary Table 16. To make our experiments deterministic, we fixed the random seeds to [0, 92, 1, 2024, 12121].

WFMs

Recent years have seen the rise of ‘foundation models’, whereby large-scale pretraining on diverse (and often unlabelled) data has led to representations that are useful for a breadth of downstream applications27,28. To evaluate the utility of such large-scale pretraining on the task of IR prediction, we used the large sensor model (LSM)54,55, a foundation model whose embeddings have shown utility for a number of downstream health tasks, including activity recognition, mood classification, hypertension detection and anxiety detection. Specifically, for IR prediction, we pretrain an LSM-2-style WFM on 40 million person-hours of data from Fitbit users, sampled at one-minute resolution. Each data sample contains 26 minutely aggregated features from a set of 5 sensors (photoplethysmography, accelerometer, skin conductance, altimeter and temperature) for a time span of 1,440 min (one day). The 26 features used as input to the model's embedding function are described in Supplementary Table 17.

A core property of these data is that they have complex, structured missingness patterns. Missing data are ubiquitous in long-duration wearable sensor recordings: none of the 1.6 million one-day instances in our dataset is entirely free of missing values. Missingness is also complex, originating from a variety of causes with varied structures. For example, large chunks of the signal can be missing if a sensor is temporarily off to save battery life; full missingness across all channels can occur in periods in which the device is not worn (off body); and short bursts of missingness can exist as a result of filtering out measurements that are clearly spurious or out of range.

In the pretraining objective, non-masked tokens are encoded before masked tokens are reintroduced for reconstruction in the subsequent decoder layers. Inputs are tokenized with a one-dimensional (1D) patch size of 10 min, resulting in 3,744 tokens (144 tokens per signal). This is implemented with a shared kernel across channels, using a two-dimensional (2D) positional embedding to encode time and signal identity. Then, the tokens are passed into ViT-1D with 25 million parameters, 12 encoder layers, with a 384-dimensional hidden size, and 4 decoder layers, with a 256-dimensional hidden size.
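The token count follows directly from the patch size and the number of input features, as the short check below shows. The `patchify` helper is a hypothetical illustration of non-overlapping 1D patching, not the model's tokenizer.

```python
MINUTES_PER_DAY = 1440   # one-day input window
PATCH_MINUTES = 10       # 1D patch size
N_FEATURES = 26          # minutely aggregated input features

tokens_per_signal = MINUTES_PER_DAY // PATCH_MINUTES
total_tokens = tokens_per_signal * N_FEATURES


def patchify(signal, patch=PATCH_MINUTES):
    """Split one 1,440-sample signal into non-overlapping patches."""
    return [signal[i:i + patch] for i in range(0, len(signal), patch)]
```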

The pretraining procedure is performed on 8 × 16 Google v5e TPUs with a batch size of 512 for 100,000 steps. During pretraining, we include the inherited mask as well as a mix of artificial masking strategies. The inherited mask reflects the pre-existing missingness inherent to the data, and the artificial mask is applied on top of it, to data that are present, so that ground truths are available for the reconstruction loss. Our artificial masking mix seeks to model real-world missingness patterns. The mix includes (1) 80% random imputation masking (to model noise), in which a random patch is masked; (2) 50% temporal slice masking (to model off body), in which all sensors at a random time point are masked; and (3) 50% signal slice masking (to model sensor off), in which all time points for a random sensor are masked. Each instance uses a randomly selected masking strategy with equal probability. Notably, we do not back-propagate on inherited mask tokens.
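The three artificial masking strategies can be sketched on a grid of (signal, patch) token positions. This is an illustration under stated assumptions, not the pretraining code: the function names are hypothetical, and the percentages are interpreted as the fraction of tokens, time points or signals hidden by each strategy.

```python
import random


def artificial_mask(n_signals, n_patches, strategy, rng):
    """Return the set of (signal, patch) positions hidden by one strategy."""
    if strategy == "random":      # models noise: hide 80% of individual tokens
        cells = [(s, t) for s in range(n_signals) for t in range(n_patches)]
        return set(rng.sample(cells, int(0.8 * len(cells))))
    if strategy == "temporal":    # models off body: hide 50% of time points
        times = rng.sample(range(n_patches), int(0.5 * n_patches))
        return {(s, t) for s in range(n_signals) for t in times}
    if strategy == "signal":      # models sensor off: hide 50% of signals
        signals = rng.sample(range(n_signals), int(0.5 * n_signals))
        return {(s, t) for s in signals for t in range(n_patches)}
    raise ValueError(f"unknown strategy: {strategy}")


def loss_positions(inherited_missing, n_signals, n_patches, rng):
    """Pick one strategy uniformly; the loss covers only tokens that were
    observed and then hidden artificially, never inherited-missing tokens."""
    strategy = rng.choice(["random", "temporal", "signal"])
    hidden = artificial_mask(n_signals, n_patches, strategy, rng)
    return strategy, hidden - inherited_missing
```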

During evaluation, the pretrained model is then able to operate directly on incomplete multimodal sensor data by dynamically attending only to observed segments. This eliminates the need for external preprocessing, such as imputing or discarding missing values, and ensures generalization from pretraining to downstream deployment in real-world settings. To obtain the frozen embedding to which the XGBoost head is applied, we take the output of the missingness-agnostic encoder and average-pool it across all unmasked tokens.
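Average pooling over observed tokens amounts to a masked mean, sketched below. The function name and the list-of-lists layout are hypothetical; in practice the token embeddings would come from the frozen encoder.

```python
def pool_observed(token_embeddings, observed):
    """Average the embeddings of observed (unmasked) tokens only."""
    kept = [emb for emb, keep in zip(token_embeddings, observed) if keep]
    if not kept:
        raise ValueError("no observed tokens to pool")
    dim = len(kept[0])
    return [sum(emb[j] for emb in kept) / len(kept) for j in range(dim)]
```

The pooled vector has the encoder's hidden size regardless of how many tokens were missing, so a single downstream regressor can be used for every participant-day.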

Independent validation cohort

The study protocol was approved by WCG (IRB no. 1371945). This validation cohort is part of a larger, 30-week longitudinal study designed to assess the effects of lifestyle modifications on cardiometabolic health metrics. Data collected throughout the study included anthropometric measurements (for example, BMI, waist circumference, skin tone and blood pressure), blood biomarkers (such as HbA1c, fasting glucose, fasting insulin and lipid panel), wearable-device data (Fitbit Charge 6, measuring heart rate, RHR, HRV, sleep duration and step count) and health and lifestyle questionnaires. Participants attended in-person appointments at the Fitbit Human Research Laboratory in San Francisco at the beginning and end of the study to complete the aforementioned measurements, tests and surveys. All participants were required to wear a Fitbit Charge 6 device continuously for a minimum of 20 h per day throughout the study.

The inclusion criteria required participants to be located in California, be at least 18 years of age, be able to stand and walk without aid, be willing to provide informed consent, be comfortable with English instructions, own and use a smartphone, be willing to download and use the Fitbit app, be comfortable using wearable devices, have regular internet access and be willing to comply with study procedures. Exclusion criteria included: unwillingness to attend laboratory visits; having uncontrolled hypertension or recent changes in related medications; conditions making blood draws impractical; known bloodborne pathogens; T1D; T2D with HbA1c > 8.0%; history of gastric surgery; non-iron-deficiency anaemia; severe mental illness; heart failure; undergoing dialysis; terminal illness; taking medications for diabetes or glucose control (with specific examples provided); taking oral steroids; using tanning lotions on arms; having wrist tattoos; skin conditions that interfere with devices; pregnancy or plans for pregnancy; recent radiological procedures with contrast agents; having non-permissible internal or irremovable external objects; weight greater than 450 lbs (204 kg); and unwillingness to participate in test protocols.

To start, 144 individuals were enrolled; 127 individuals had complete wearable-device data and 82 individuals had complete blood biomarker data acquired during an in-person visit at the end of the study. Ultimately, 72 individuals had both complete wearable-device data and complete physiological biomarker data, and this group was used as an independent validation cohort for the IR prediction model. For the validation, we used blood biomarkers from the final study time point to ensure the corresponding wearable-device data constituted a substantial representation of participants’ lifestyles. Wearable-device data encompassed all available records up to the final visit. Similarly, demographic data (age and BMI) were extracted from the final visit.

Evaluation of prediction and classification of HOMA-IR

Evaluation of regression

The first stage of our framework for predicting IR is to predict the continuous HOMA-IR values (regression). To evaluate the performance of each experiment, we stored the predicted HOMA-IR values in the test set of each fold, computing the average and standard deviation of the mean absolute error across all test folds. To compute the overall concordance between our predicted values and the true HOMA-IR, we calculated \({R}^{2}\) on all predicted values, that is, on the prediction for each individual made when that individual was in the test set of a fold.
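The two levels of aggregation (per-fold error, pooled concordance) are sketched below. The function names are hypothetical, and the use of the sample standard deviation across folds is an assumption.

```python
from statistics import mean, stdev


def mean_absolute_error(y_true, y_pred):
    return mean(abs(t - p) for t, p in zip(y_true, y_pred))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_bar = mean(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - y_bar) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def summarize_folds(folds):
    """folds is a list of (y_true, y_pred) pairs, one per test fold.

    Returns the mean and standard deviation of the per-fold mean absolute
    error, and R^2 computed once over the pooled out-of-fold predictions.
    """
    fold_errors = [mean_absolute_error(t, p) for t, p in folds]
    pooled_true = [v for t, _ in folds for v in t]
    pooled_pred = [v for _, p in folds for v in p]
    return mean(fold_errors), stdev(fold_errors), r_squared(pooled_true, pooled_pred)
```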

Evaluation of IR classification

To classify an individual as insulin resistant, we use continuous HOMA-IR values (either predicted or true values for ground truth) and apply the threshold of HOMA-IR = 2.9 for binary classification. Individuals with a HOMA-IR value greater than or equal to 2.9 are considered to be insulin resistant; all others are considered not insulin resistant. To evaluate our classification, we used specificity, sensitivity, precision, AUROC and area under the precision-recall curve (AUPRC). Similar to the regression evaluation, we report the average and standard deviation of these metrics across the test folds for each experiment.
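The thresholded metrics can be computed from a confusion matrix, as in the sketch below (AUROC and AUPRC, which need the full ranking of predictions, are omitted). The function name is hypothetical, and the sketch assumes each class and each predicted label occurs at least once, so that no denominator is zero.

```python
def threshold_metrics(true_homa, pred_homa, threshold=2.9):
    """Sensitivity, specificity and precision after thresholding HOMA-IR."""
    tp = fp = tn = fn = 0
    for t, p in zip(true_homa, pred_homa):
        actual, predicted = t >= threshold, p >= threshold
        if actual and predicted:
            tp += 1
        elif actual:
            fn += 1
        elif predicted:
            fp += 1
        else:
            tn += 1
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "precision": tp / (tp + fp),
    }
```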

Time-dependent sensitivity and robustness analysis

To determine the robustness of our approach to time-dependent aggregation, we performed n-day aggregation of time series on a rolling window for the entire study duration. The goal of this analysis was to check the consistency (robustness) of our predictions for various time windows within the study. We report the consistency of predictions for all individuals for n = {7, 14, 30, 60}. We did not include 90 and 120 days, because these window lengths would yield at most one or two windows over the study period. In addition, we compute the coefficient of variation of the predicted HOMA-IR values across the windows and report the results.
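The rolling-window analysis can be sketched as follows. The function names are hypothetical, and both the stride of one day and the use of the population standard deviation in the coefficient of variation are assumptions.

```python
from statistics import mean, pstdev


def rolling_windows(values, n):
    """All contiguous n-day windows over a daily series (stride of one day)."""
    return [values[i:i + n] for i in range(len(values) - n + 1)]


def coefficient_of_variation(predictions):
    """Standard deviation of the per-window predictions divided by their mean."""
    return pstdev(predictions) / mean(predictions)
```

Each window would be aggregated into features and passed to the trained model; a low coefficient of variation across the resulting HOMA-IR predictions indicates that the prediction is stable with respect to the choice of window.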

IR agent

LLMs are powerful tools for generating language, and have considerable potential to improve human–computer interactions across many domains, including education, clinical practice and interpretation of personal health metrics56,57. Given the known challenges for patients of understanding laboratory results and derived metrics, such as HOMA-IR, we aimed to develop an LLM-based framework for interpreting the results, as well as interacting with users for follow-up questions and recommendations.

Our proposed LLM-based agent is a ReAct (reasoning and acting) agent58 that is capable of multi-step reasoning and planning. ReAct agents are a class of LLM-based agents that synergistically combine internal reasoning with external actions to accomplish complex tasks. Unlike traditional LLMs, which mainly generate text, ReAct agents interleave textual reasoning traces (‘thoughts’) with calls to external tools or information sources (‘actions’) and with the results returned by those calls (‘observations’), reasoning over this multi-step process.

Our IR agent uses the ReAct iterative process to dynamically plan, gather necessary information (for example, from the web), adjust its strategy on the basis of retrieved facts or computed observations and ultimately respond to the incoming health-related query. The IR agent’s ‘thoughts’ provide interpretability and allow the agent to decompose complex problems, whereas its ‘actions’ provide grounding and interaction with the real world or specialized knowledge bases, overcoming the limitations of relying solely on the pretrained knowledge of the LLM. This interplay between internal deliberation and external interaction enables our agent to tackle tasks that require both reasoning and grounded knowledge acquisition in real time.

IR agent toolbox

A key part of our IR agent is its ability to intelligently select the set of tools it requires for answering incoming queries. The IR agent’s toolbox includes grounding tools (organic web search via Google Search), arithmetic tools, a Python sandbox to execute code, as well as our HOMA-IR prediction models. These models and functions serve as a set of actions for our agent, ensuring accurate and deterministic computations that reduce the risk of error and hallucinations58. We describe the set of tools in Supplementary Table 18.
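The thought, action and observation cycle with a tool registry can be illustrated by the schematic loop below. It is not the IR agent and does not use the OneTwo API: the `policy` callable is a scripted stand-in for the LLM, and the tool name `calculator`, the trace layout and the example question are all hypothetical.

```python
def react_loop(question, policy, tools, max_steps=5):
    """Alternate policy decisions with tool calls until the policy finishes."""
    trace = []  # (thought, action, argument, observation) tuples
    for _ in range(max_steps):
        thought, action, argument = policy(question, trace)
        if action == "finish":
            trace.append((thought, action, argument, None))
            return argument, trace
        observation = tools[action](argument)  # deterministic tool call
        trace.append((thought, action, argument, observation))
    return None, trace  # step budget exhausted without an answer


def scripted_policy(question, trace):
    """Stand-in for the LLM: call the calculator once, then answer."""
    if not trace:
        return ("I need the HOMA-IR value first.", "calculator", (10.0, 81.0))
    value = trace[-1][3]
    return ("I have the value.", "finish", f"Estimated HOMA-IR is {value:.1f}.")


tools = {"calculator": lambda args: args[0] * args[1] / 405.0}
answer, trace = react_loop("What is my HOMA-IR?", scripted_policy, tools)
```

Delegating the arithmetic to a tool, rather than asking the language model to compute it, is what makes the numerical part of the response deterministic.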

Implementation of the IR agent

We use Google DeepMind’s open-source OneTwo library59 to implement the IR agent. OneTwo is a Python framework specifically designed for facilitating research on prompting strategies for large foundation models. Crucially, OneTwo’s flexible execution model supports the complex interaction patterns required by our IR agent, enabling the interleaving of LLM-generated reasoning (‘thoughts’) with external ‘actions’ such as web search or executing our trained machine-learning models. OneTwo’s built-in support for tool use and agent abstractions directly facilitated the development of an agent that is LLM-agnostic (that is, it can use other LLMs, not just models developed by Google).

Evaluation of the IR agent

To evaluate the IR agent, our goal was to measure the added benefit of including information about IR (as predicted by our machine-learning models) for metabolically relevant queries, when appropriate, compared with LLM responses that do not include this information. In addition, we wanted to assess the accuracy of the IR interpretation and comprehensiveness in these responses, as evaluated by endocrinologists (human experts).

Set-up of the human expert evaluation

From our study, we selected five participants with atypical metabolic profiles, in consultation with a doctor who was not part of the evaluation team, to ask metabolically relevant questions.

These participants were selected from the following groups:

- Individuals with clinically normal HbA1c, but classified as insulin resistant;
- Individuals with obesity with a sedentary lifestyle (median steps of fewer than 8,000 and average steps of fewer than 8,000 steps per week) who were classified as being insulin resistant;
- Individuals with obesity with an active lifestyle (average step count of 10,000 or more per day, computed on a weekly basis) who were classified as insulin resistant;
- Individuals with obesity with an active lifestyle who were classified as insulin sensitive.

Using the data from these individuals, we then generated responses using our IR agent and the same base LLM at the core of our agent (Gemini 2.0 Flash); note that both models had access to the same data, which include demographics, blood biomarkers and wearable features, and were asked the same questions.

After generating answers to these questions from the IR agent and the base LLM, we recruited five practising endocrinologists to evaluate each model response in a blinded manner: the expert evaluators did not know which response was from the base model and which was from the proposed IR agent, and neither the design nor the scope of the IR agent was shared with them, to avoid any potential bias in the ratings. More specifically, we asked the endocrinologists to evaluate the responses in two ways:

Side-by-side comparison

This technique is commonly used in the field to measure the effect of variables (for example, inclusion or exclusion of certain features) in generated responses from similar models. The aim was to measure the benefits provided by our IR agent, including the addition of IR information, compared with the base LLM without access to the IR information and the specialized tool. We asked the expert evaluators to answer the following rubrics while considering responses side-by-side:

Absolute accuracy of the IR agent

In addition to the side-by-side comparisons, we aimed to evaluate the accuracy of the IR agent by itself across different clinically relevant dimensions60. Therefore, we asked the endocrinologists to rate the following ‘absolute’ rubric questions:

Statistical analysis

Statistical significance was determined using two-sided Wilcoxon rank-sum tests, with P values adjusted for multiple comparisons using the Benjamini–Hochberg method. Data processing, model training and evaluation were implemented in Python using numpy v.2.0.2, tensorflow v.2.19.0, scipy v.1.16.3, statsmodels v.0.14.6, sklearn v.1.6.1, shap v.0.50.0, xgboost v.3.1.2, torch v.2.9.0, pandas v.2.2.2, umap v.0.5.9.post2, pickle v.4.0, pytz v.2025.2, re v.2.2.1, tqdm v.4.67.1, IPython v.7.34.0, json v.2.0.9 and altair v.5.5.0.
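For reference, the Benjamini–Hochberg adjustment can be written in a few lines. This standalone sketch is for illustration only and is not the statsmodels routine used in the analysis; the function name is hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted P values in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # ascending P values
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest P value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

Each raw P value is scaled by m/rank, and the running minimum ensures that the adjusted values never decrease as the raw values increase.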

Visualization methods

We used matplotlib v.3.10.0, seaborn v.0.13.2, bokeh v.3.7.3 and Figma to plot most of the figures.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.