Oak Ridge, Tennessee

The fastest supercomputer in the world is a machine known as Frontier, but even this speedster with nearly 50,000 processors has its limits. On a sunny Monday in April, its power consumption is spiking as it tries to keep up with the amount of work requested by scientific groups around the world.

The electricity demand peaks at around 27 megawatts, enough to power roughly 10,000 houses, says Bronson Messer, director of science at Oak Ridge National Laboratory in Tennessee, where Frontier is located. With a note of pride in his voice, Messer uses a local term to describe the supercomputer’s work rate: “They are running the machine like a scalded dog.”

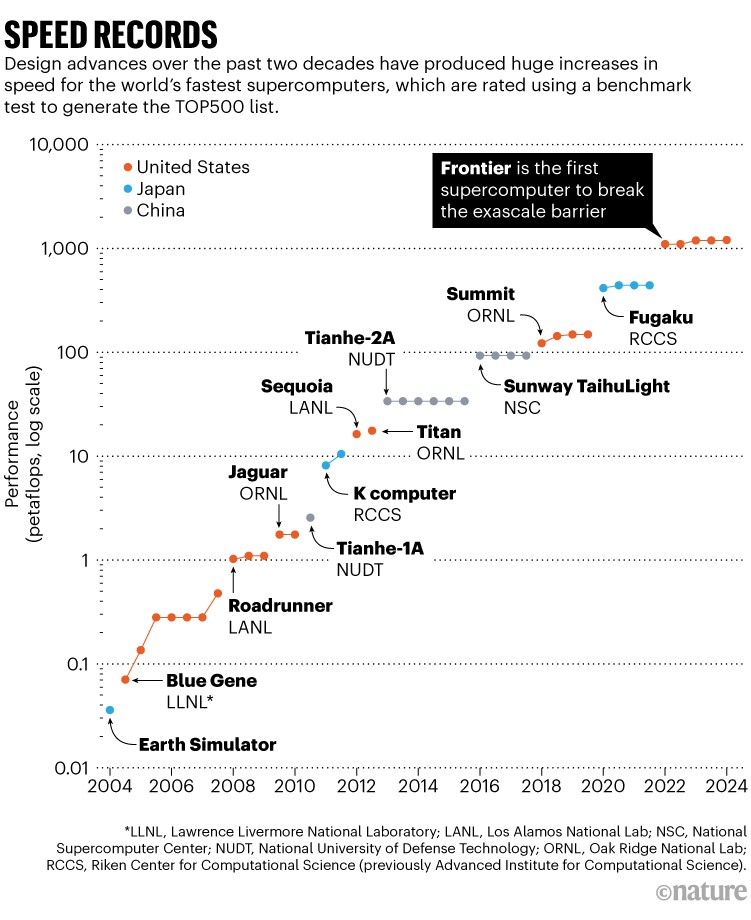

Frontier churns through data at record speed, outpacing 100,000 laptops working simultaneously. When it debuted in 2022, it was the first to break through supercomputing’s exascale speed barrier: the capability of executing an exaflop, or 10¹⁸ floating-point operations per second. The Oak Ridge behemoth is the latest chart-topper in a decades-long global trend of pushing towards larger supercomputers (although it is possible that faster computers exist in military labs or otherwise secret facilities).


But speed and size are secondary to Frontier’s main purpose — to push the bounds of human knowledge. Frontier excels at creating simulations that capture large-scale patterns with small-scale details, such as how tiny cloud droplets can affect the pace at which Earth’s climate warms. Researchers are using the supercomputer to create cutting-edge models of everything from subatomic particles to galaxies. Some projects are simulating proteins to help develop new drugs, modelling turbulence to improve aeroplane engine design and creating open-source large language models (LLMs) to compete with the artificial intelligence (AI) tools from Google and OpenAI.

Researchers log on to Frontier from all over the world. In 2023, the supercomputer had 1,744 users in 18 countries. And, in 2024, Oak Ridge anticipates that Frontier users will publish at least 500 papers based on computations performed on the machine.

“Frontier is not unlike the James Webb Space Telescope,” says biophysicist Dilip Asthagiri of Oak Ridge National Laboratory. “We should see it as a scientific instrument.”

Inside the machine

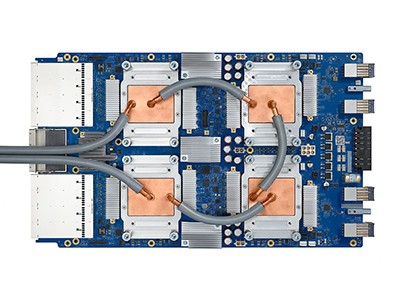

The brains of Frontier reside in a warehouse-sized room filled with a steady electronic hum that is gentle enough to talk over. In the room are 74 identical glossy black racks that hold a total of 9,408 nodes. These are the workhorses of a supercomputer. Each node consists of four graphics processing units (GPUs) and one central processing unit (CPU).
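The node counts above are enough to recover the processor totals quoted elsewhere in this article. The following is a minimal sketch of that arithmetic; the variable names are illustrative, and only the figures 9,408, four and one come from the text.

```python
# Figures taken from the text; names are illustrative only.
NODES = 9_408
GPUS_PER_NODE = 4
CPUS_PER_NODE = 1

total_gpus = NODES * GPUS_PER_NODE          # GPUs across the whole machine
total_cpus = NODES * CPUS_PER_NODE          # one CPU per node
total_processors = total_gpus + total_cpus  # GPUs and CPUs combined

print(f"GPUs: {total_gpus:,}")
print(f"CPUs: {total_cpus:,}")
print(f"Processors: {total_processors:,}")
```

The totals come to 37,632 GPUs and 47,040 processors, consistent with the rounded figures of nearly 38,000 GPUs and nearly 50,000 processors.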

A team of engineers continuously monitors the machine for signs of trouble, says Corey Edmonds, a technician at Hewlett Packard Enterprise, the company that built the supercomputer. Edmonds, who is based at Oak Ridge, is doing maintenance surgery on Frontier on this day. After fixing a broken connector on one of the nodes, he squeezes grey thermal grease from a syringe on to a silvery rectangle — one of the node’s four GPUs. This helps the GPU to dissipate heat quickly and stay cool.

Frontier owes its speed mainly to its extensive use of GPUs. These chips, first developed to render realistic graphics for computer gamers, are now powering advances in AI through machine-learning applications.

“They can run really fast,” says Messer. “They’re also abysmally stupid.” GPUs excel at crunching many numbers at once — and not much else. “They can do one thing over and over and over and over again,” he says, which makes them useful for speedy work in supercomputer calculations.

Researchers have to customize their code to take advantage of Frontier’s GPUs. Messer likens a scientist using Frontier for the first time to a suburban driver commandeering a race car. “It’s got a steering wheel, gas pedal and a brake,” he says. “But try to get a regular driver into a Formula One car and actually have them go from here to there.”

Big science

It’s not easy for researchers to get a chance to use Frontier. Messer and three colleagues are gathering on this April Monday to evaluate research proposals for the machine. On average, they approve around one in four proposals, and last year awarded time to 131 projects. In particular, applicants need to make the case that their project can take advantage of the supercomputer’s entire system.

The most common allocations they offer are around 500,000 node-hours, equivalent to running the entire machine for three days continuously. Their largest allocation is four times bigger. Researchers who are granted time on Frontier get about ten times more computing resources than they can procure anywhere else, says Messer.

Today, his team is doling out smaller awards of around 20,000 node-hours, which it does on a weekly basis. Many projects take advantage of Frontier’s ability to simultaneously model a wide range of spatial and time scales. In total, Frontier has about 65 million node-hours available each year.
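To get a feel for these allocation sizes, it can help to convert node-hours into time on the whole machine. The sketch below does this under a simplifying assumption that all 9,408 nodes are available around the clock; the function name and constants are illustrative, not part of any Oak Ridge tooling.

```python
# Assumption: every one of the 9,408 nodes is available 24 hours a day.
NODES = 9_408
HOURS_PER_DAY = 24
HOURS_PER_YEAR = 365 * 24

def full_machine_days(node_hours, nodes=NODES):
    """Days of continuous whole-machine time that a node-hour budget buys."""
    return node_hours / nodes / HOURS_PER_DAY

typical_award = 500_000             # most common allocation (node-hours)
largest_award = 4 * typical_award   # "four times bigger"
weekly_award = 20_000               # smaller weekly awards
annual_budget = 65_000_000          # node-hours offered per year

# Share of the theoretical maximum (all nodes, all year) that is offered.
theoretical_max = NODES * HOURS_PER_YEAR
offered_fraction = annual_budget / theoretical_max

print(f"Typical award: {full_machine_days(typical_award):.1f} days")
print(f"Largest award: {full_machine_days(largest_award):.1f} days")
print(f"Weekly award:  {weekly_award / NODES:.1f} hours")
print(f"Offered share of theoretical maximum: {offered_fraction:.0%}")
```

Under this assumption, a typical award works out to a little over two days of whole-machine time, in the same ballpark as the three days quoted above, and the 65 million node-hours on offer are roughly four-fifths of the theoretical maximum. The remainder presumably covers maintenance and other uses, although the text does not say so.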

Technicians work on Frontier, which has nearly 50,000 processors and is cooled by water. Credit: Nick McGinn for Nature

Scientists want to use Frontier, for example, to simulate atomically accurate biological processes, such as proteins or nucleic acids in solution interacting with other parts of cells.

This May, Asthagiri and Nick Hagerty, a high-performance-computing engineer at Oak Ridge, used Frontier to simulate a cube-shaped drop of liquid water containing more than 155 billion water molecules. “It was to push the machine,” says Asthagiri. The simulated cube is about one-tenth the width of a human hair, and the model is among the largest atomic-level simulations ever made, says Asthagiri, who has not yet published the work in a peer-reviewed journal.
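The size of that cube can be checked with a short calculation. This is a rough sketch that assumes bulk liquid water at room temperature (density about 0.997 g/cm³); the simulation's actual density is not given in the text, and the function name is illustrative.

```python
AVOGADRO = 6.02214076e23    # molecules per mole (exact SI value)
MOLAR_MASS_WATER = 18.015   # grams per mole
DENSITY_WATER = 0.997       # g/cm^3, assumed: liquid water near 25 °C

def cube_side_micrometres(n_molecules):
    """Side length of a cube of liquid water holding n_molecules."""
    mass_g = n_molecules / AVOGADRO * MOLAR_MASS_WATER
    volume_cm3 = mass_g / DENSITY_WATER
    side_cm = volume_cm3 ** (1 / 3)
    return side_cm * 1e4    # 1 cm = 10,000 micrometres

side = cube_side_micrometres(155e9)
print(f"Cube side: {side:.2f} micrometres")
```

The result is a cube roughly 1.7 micrometres on a side. Ten times that is about 17 micrometres, which sits at the fine end of commonly quoted widths for human hair.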

These initial simulations are building towards more ambitious goals to model entire cells from the atoms up. In the near term, researchers would like to simulate a cellular organelle and use the results to inform laboratory experiments. They are also working to combine Frontier’s high-resolution simulations of biological materials with ultra-fast imaging using X-ray free-electron lasers to accelerate discoveries.

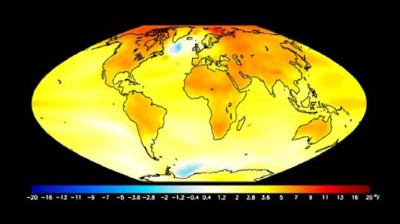

With Frontier, climate models have become more precise, too. In 2023, Oak Ridge climate scientist Matt Norman and other researchers used the supercomputer to run a global climate model with 3.25-kilometre resolution. Frontier’s computing capability was necessary for them to create a decades-long forecast at this resolution¹. The model also incorporated the effects of the complex motion of clouds, which occurs on an even finer resolution. “It took all of Frontier to do it,” says Norman.

Models would run significantly more slowly on other computers to achieve the same resolution while including the effects from clouds, he says. This limitation is a major hurdle for climate scientists seeking to forecast conditions, because cloud behaviour influences the movement of energy around the globe.


For a model to be practical for weather and climate forecasts, it needs to run at least one simulated year per day. Frontier could run through 1.26 simulated years per day for this model¹, a rate that will allow the researchers to create more-accurate 50-year forecasts than before.
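The throughput figure translates directly into wall-clock time for a forecast. A minimal sketch, assuming the rate stays constant for the whole run and ignoring queueing, restarts and output costs (the function name is illustrative):

```python
def wall_clock_days(simulated_years, simulated_years_per_day):
    """Days of continuous running needed to cover a simulated period."""
    return simulated_years / simulated_years_per_day

# Rates from the text: the practical minimum and Frontier's measured rate.
for rate in (1.0, 1.26):
    days = wall_clock_days(50, rate)
    print(f"{rate:.2f} simulated years/day -> {days:.1f} days for 50 years")
```

At 1.26 simulated years per day, a 50-year forecast takes about 40 days of whole-machine time, compared with 50 days at the minimum practical rate.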

Frontier also brings higher resolution to cosmological scales. Astrophysicist Evan Schneider at the University of Pittsburgh in Pennsylvania is using the supercomputer to study how Milky Way-sized galaxies evolve as they age. Frontier’s galaxy models span four orders of magnitude, up to large-scale galactic structures about 100,000 light years (30,660 parsecs) in size. Before Frontier, the largest structures she could model at comparable resolution were dwarf galaxies, which are about one-fiftieth the mass.

Schneider simulates how supernovae cause gas to leak out of these galaxies². Over time, thousands to millions of supernova explosions collectively release a significant amount of gas that ultimately exits the galaxy³. Because that gas is the raw material from which new stars are born, star formation slows down as the galaxies age. Frontier allows Schneider to include the effects of hotter gas than is practical with other computers. Her simulations suggest that current cosmological models downplay the role of this hot gas in the evolution of galaxies.

AI researchers are also clamouring for time on Frontier’s GPUs, known for their role in training neural-network-based architectures such as the transformer model underpinning ChatGPT. With its nearly 38,000 GPUs, Frontier occupies a unique public-sector role in the field of AI research, which is otherwise dominated by industry.

Nur Ahmed, an economics researcher now at the University of Arkansas in Fayetteville, and his colleagues highlighted the gap between AI in academia and industry in a commentary last year⁴. In 2021, 96% of the largest AI models came from industry. On average, industry models were nearly 30 times the size of academic models. The discrepancy is evident in monetary investment, too. Non-defence US agencies provided US$1.5 billion to support AI research in 2021. In that same year, industry spent more than $340 billion globally.

Mind the gap

The gap has only widened since the release of commercial large language models, says Ahmed. The computational resources to train OpenAI’s GPT-4, for example, cost an estimated $78 million, whereas Google spent $191 million to train Gemini Ultra (see go.nature.com/44ihnhx). This gulf in investment leads to a stark asymmetry in the computing resources available to researchers in industry versus academia.

Industry is pushing the boundaries of basic AI research, and this could pose a problem for the field, write Ahmed and his co-authors. Industry dominance could lead to a lack of basic research that is not immediately profitable and result, for example, in the development of AI technologies that neglect the needs of lower-income communities, say the researchers. In an unpublished study, Ahmed has analysed 6 million peer-reviewed articles and 32 million patent citations and found that “on average, industry tends to ignore some of the concerns of marginalized populations in the global south”.


What’s more, many models have problems with gender and racial bias, as found in several commercial face-recognition systems based on AI. Academics could serve as auditors to evaluate the risks from AI models, but to do so they need access to computational resources at the same scale as industry, says Ahmed.

That’s where Frontier comes in. Once Oak Ridge approves a project application, the researcher uses the supercomputer for free, as long as they publish their results. That will help university researchers to compete with companies, says computer scientist Abhinav Bhatele at the University of Maryland in College Park. “The only way people in academia can train similar-sized models is if they have access to resources like Frontier,” he says.

Bhatele is using Frontier to develop open-source LLMs as a counterbalance to industry models⁵. “Often when companies train their models, they keep them proprietary, and they don’t release the model weights,” says Bhatele. “With this open research, we can make these models freely available for anyone to use.” Over the next year, he and his team aim to train a range of LLMs of different sizes, and they will make these models, along with their weights, open-source. They have also made the software for training the models freely available. In this way, says Bhatele, Frontier has a crucial role in a movement in the field to “democratize” AI, that is, to include more people in the technology’s development.

The race continues

A few doors down from the room housing Frontier, its predecessor is still working hard performing jobs for scientists around the world. This machine, called Summit, held the world record for speed between 2018 and 2019 and is currently the world’s ninth-fastest supercomputer among public machines. With its long black chrome racks, Summit resembles Frontier, but has a louder cooling system and works at one-eighth the speed.

Summit’s history hints at Frontier’s future. Frontier first topped the list in 2022, and is likely to surrender that spot before long. The second-place supercomputer, Aurora, based at Argonne National Laboratory in Illinois, is expected to exceed Frontier’s performance at some point with further optimization. Lawrence Livermore National Laboratory’s El Capitan, scheduled to come online later this year at the California-based lab, is also projected to beat Frontier eventually. Also in the mix is Jupiter, an exascale supercomputer in Germany that is due to debut later this year.

Mounting geopolitical tensions further complicate the rankings. Frontier’s title comes from its position on a semiannual ranking from an organization called the TOP500. It rates the world’s supercomputers on the basis of their reported performance on a benchmark task that involves solving a dense system of linear equations.
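The idea behind such a benchmark can be illustrated on a very small scale. The sketch below is not the TOP500 benchmark code (High-Performance Linpack, a heavily optimized distributed program); it is a plain-Python toy that solves a random dense system by Gaussian elimination with partial pivoting and estimates a rate from the leading-order operation count of about (2/3)n³. The function names, matrix size and seed are all illustrative choices.

```python
import random
import time

def solve_dense(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Build an augmented matrix from copies so the inputs are untouched.
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        # Partial pivoting: move the largest entry in this column to the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if m[pivot][col] == 0.0:
            raise ValueError("matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        # Eliminate the entries below the diagonal.
        for row in range(col + 1, n):
            factor = m[row][col] / m[col][col]
            for k in range(col, n + 1):
                m[row][k] -= factor * m[col][k]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        known = sum(m[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (m[row][n] - known) / m[row][row]
    return x

def benchmark(n=120, seed=0):
    """Time one solve; return (estimated flop/s, largest residual)."""
    rng = random.Random(seed)
    a = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(n)]
    b = [rng.uniform(-1, 1) for _ in range(n)]
    start = time.perf_counter()
    x = solve_dense(a, b)
    elapsed = time.perf_counter() - start
    flops = (2 / 3) * n ** 3  # leading-order count; lower-order terms ignored
    residual = max(
        abs(sum(a[i][j] * x[j] for j in range(n)) - b[i]) for i in range(n)
    )
    return flops / elapsed, residual

if __name__ == "__main__":
    rate, residual = benchmark()
    print(f"Estimated rate: {rate:.3e} flop/s, residual: {residual:.1e}")
```

A laptop running this interpreted code will report a rate many orders of magnitude below an exaflop, which is the point: the benchmark rewards machines that can keep an enormous number of arithmetic units busy on one tightly coupled problem.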

But computing experts say it is likely that the United States and China are not sharing information publicly about their computing assets, especially because of increasing strain between the two countries. “There is this idea of a kind of a race in supercomputing,” says Kevin Klyman, a policy researcher at the Atlantic Council, a think tank in Washington DC. In fact, in 2022, the administration of US President Joe Biden implemented controls against exporting semiconductors to China, specifically citing concern about China’s supercomputing capability.

In the supercomputing arena, the tensions began years ago. Notably, in 2016, China overtook the United States in the number of supercomputers on the TOP500 list. “That caused a lot of anxiety in the United States,” says Klyman. “A lot of US policymakers said, ‘How do we catch up in the list?’”

The two countries have the most supercomputers on the TOP500 rankings released this June: the United States has 168 machines and China has 80. Researchers wonder, however, whether these countries have powerful supercomputers that they have not disclosed in public. The number of Chinese machines on the current list has dropped since last November, when it included 104 machines, and China did not report results for any new supercomputers.

Oak Ridge is already planning Frontier’s successor, called Discovery, which should have three to five times the computational speed. It will be the latest in a decades-long quest for speed (see ‘Speed records’). Frontier is 35 times faster than Tianhe-2A, which was the fastest computer in 2014, and 33,000 times faster than Earth Simulator, the fastest supercomputer in 2004.

Figure: ‘Speed records’. Source: www.TOP500.org
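The two speed-ups quoted above imply an average doubling time for the fastest machine. The following sketch assumes the comparison is made in 2024, so the intervals are 20 years (from 2004) and 10 years (from 2014), and it assumes smooth exponential growth; the function name is illustrative.

```python
import math

def doubling_time_years(speedup, years):
    """Average time for top speed to double, assuming exponential growth."""
    return years / math.log2(speedup)

# Speed-up factors from the text; the 2024 comparison year is an assumption.
since_2004 = doubling_time_years(33_000, 20)
since_2014 = doubling_time_years(35, 10)

print(f"2004 to 2024: doubling every {since_2004:.2f} years")
print(f"2014 to 2024: doubling every {since_2014:.2f} years")
```

On these assumptions, top speed doubled roughly every 1.3 years over the past two decades but only about every 2 years over the past decade, which suggests that the pace of improvement has slowed.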

Researchers are eager for more speed. A bigger computer would allow Schneider, for example, to model galaxies at even higher resolution, she says. It could also give scientists bigger compute budgets.

But engineers face an ongoing challenge: supercomputers consume a lot of energy, and future machines are likely to need even more. So researchers are continuing to push for improvements in energy efficiency. Frontier is more than four times as efficient as Summit, in large part because it is cooled by water at an ambient temperature, unlike Summit, which uses chilled water. About 3–4% of Frontier’s total energy consumption goes to cooling, compared with 10% for Summit.

Energy efficiency has been a key bottleneck for building faster supercomputers for years. “We could have built an exascale supercomputer in 2012, but it just would have been way too expensive to power it,” says Messer. “We would have needed one or two orders of magnitude more power to be able to provide electricity to it.”

As evening settles at the Oak Ridge facility, the hallways on Frontier’s floor are empty save for a skeleton crew. In the supercomputer’s control room, Conner Cunningham is charged with babysitting Frontier for the night. From 7 p.m. to 7 a.m., his job is to make sure no trouble arises as the supercomputer churns through tasks from researchers around the world. He keeps an eye on Frontier using more than a dozen monitors, which display global cybersecurity threats and security-camera footage of the building. A television in the corner shows the local weather on mute, to alert him of any oncoming storms that might interrupt power supplies.

But most nights are quiet enough for Cunningham to study for an online computer science degree from his desk. He’ll perform a few walk-throughs to check for anything unexpected on the premises, but the job is largely passive.

“It’s kind of like with firefighters,” he says. “If anything happens, you need somebody watching.” He’s procured four burritos and some Pepsi to sustain him through his shift. He won’t be sleeping tonight — and neither will Frontier.