Design and fabrication

Photonic device and circuit-level simulations were performed using ANSYS Lumerical tools, whereas the integrated electronics followed a design flow using Cadence Virtuoso.

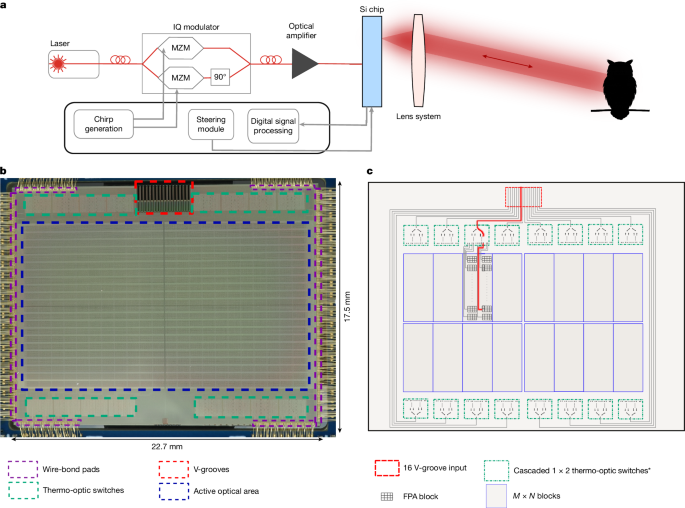

The finalized design was verified against the design rules using Cadence’s Physical Verification System (PVS). The demonstrated integrated monostatic FPA was fabricated using GlobalFoundries’ 45SPCLO 300-mm silicon photonics platform, which enables monolithic integration of photonic devices with 45-nm silicon-on-insulator RF CMOS electronics. Most of the photonic devices in the demonstrated FPA were based on the foundry’s standard process development kit but were further miniaturized to meet stringent footprint requirements, allowing the integration of 61,952 pixels. Several dies from different wafers were tested, and no inoperative thermo-optic switches or dead pixels were observed; however, on average 42 of the 61,952 pixels showed noise more than twice the array-mean noise level, which reduces their SNR.

Loss budget

The FPA is supplied with FMCW light through 16 optical channels by means of a fibre ribbon. Each channel passes through a switch network before reaching its designated pixel row. This switch network consists of cascaded 1:2 thermo-optic switches, with the first five switching layers located outside the pixel array and an extra four layers integrated within each pixel block. The switch architecture introduces approximately 0.4 dB of loss per layer outside the array and 0.5 dB per layer within the pixel block, resulting in a total switching loss of around 4 dB. An extra insertion loss of around 0.7 dB occurs at the V-grooves36, where the optical fibres are coupled to the chip.
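Because decibel losses add along a cascade, the switching loss follows directly from the per-layer figures quoted above. A minimal arithmetic sketch (the constant and function names are illustrative):

```python
# Switching-loss budget per optical channel, using the per-layer figures above.
LAYERS_OUTSIDE = 5        # 1:2 switch layers before the pixel array
LAYERS_IN_BLOCK = 4       # 1:2 switch layers inside each pixel block
LOSS_OUTSIDE_DB = 0.4     # approximate loss per layer outside the array
LOSS_IN_BLOCK_DB = 0.5    # approximate loss per layer inside a pixel block
V_GROOVE_DB = 0.7         # fibre-to-chip insertion loss at the V-groove


def switching_loss_db(n_out=LAYERS_OUTSIDE, n_in=LAYERS_IN_BLOCK,
                      l_out=LOSS_OUTSIDE_DB, l_in=LOSS_IN_BLOCK_DB):
    """Total loss of the cascaded switch tree; losses in dB simply add."""
    return n_out * l_out + n_in * l_in


switch_db = switching_loss_db()          # 5 * 0.4 + 4 * 0.5
total_db = switch_db + V_GROOVE_DB       # including the V-groove coupling
print(f"switching: {switch_db:.1f} dB, with V-groove: {total_db:.1f} dB")
```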

A mean value of 426 μW per pixel is measured when 32 mW is delivered per optical channel. Nonlinear effects such as two-photon absorption and free-carrier absorption37 limit the power in silicon waveguides to approximately 16 mW. The extra 4-dB loss is attributed to a combination of two-photon absorption/free-carrier absorption and routing. Nonlinear losses can be eliminated by the use of advanced architectures combining efficient distribution of power in silicon waveguides with silicon nitride components and efficient routing.
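As a quick check on the figures above, the quoted powers can be converted to dBm. This minimal sketch only restates the numbers in this paragraph; it does not apportion the difference among V-groove, switching, nonlinear and routing losses.

```python
import math


def mw_to_dbm(p_mw):
    """Convert optical power in mW to dBm."""
    return 10 * math.log10(p_mw)


p_channel_dbm = mw_to_dbm(32.0)   # power delivered per optical channel
p_pixel_dbm = mw_to_dbm(0.426)    # mean measured power per pixel
p_limit_dbm = mw_to_dbm(16.0)     # approximate nonlinear limit in silicon

print(f"channel {p_channel_dbm:.2f} dBm, pixel {p_pixel_dbm:.2f} dBm")
print(f"channel-to-pixel difference: {p_channel_dbm - p_pixel_dbm:.1f} dB")
print(f"32 mW exceeds the ~16 mW limit by {p_channel_dbm - p_limit_dbm:.1f} dB")
```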

Experimental set-up

The experimental set-up used for the measurement in Fig. 3 is presented in Fig. 4e. Frequency-modulated light at 1,310 nm is generated from a fixed-frequency butterfly-packaged single-mode DFB laser (Innolume DFB-1310-PM-50-NL) with a linewidth of approximately 100 kHz. The infrared light from the seed laser is modulated using a silicon photonics IQ modulator. The modulated output then undergoes two-stage amplification using booster optical amplifiers (BOA-1310-50-PM-200mW).

To enable simultaneous operation of different sections of the 4D imaging sensor, the FMCW light is split into 16 fibres and coupled into the FPA through V-groove inputs. To ensure stability and optimal performance, all 16 polarization-maintaining optical fibres required for the full array must be precisely aligned and epoxied into the V-grooves38,39.

The lens systems used for imaging in Fig. 3 are the commercial lenses VS-3514H1-SWIR and VS-5018H1-SWIR from VS Technology with focal lengths f of 35 mm and 50 mm, respectively. They are mechanically attached to the imaging FPA by means of a 3D-printed adaptor, designed to place the lens at the proper working distance. The adaptor is mechanically screwed onto the carrier board. This configuration eliminates relative movements between the imaging FPA and the lens, therefore decreasing the sensitivity of the system to mechanical vibrations.

FPA emission characterization

To characterize the different grating couplers used in the FPA design, the far-field behaviour of dedicated test structures was measured using the knife-edge technique40. The normalized power emitted by a grating designed with a 10° emission angle in the back end of the chip, as a function of the blade position, is presented in Extended Data Fig. 1a. The blade is oriented perpendicular to the propagation direction of the light, as illustrated in Extended Data Fig. 1b.
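The knife-edge formula relates transmitted power to blade position for a Gaussian beam. The sketch below, run on a synthetic scan, uses the common fit-free 10–90% estimate (x10 − x90 ≈ 1.2816 w for the 1/e² radius w) rather than the least-squares fit described in the text; the function names and the synthetic data are illustrative assumptions.

```python
import math


def knife_edge_power(x, x0, w):
    """Normalized transmitted power with the blade edge at x.

    Gaussian beam of 1/e^2 radius w centred at x0; the blade covers more
    of the beam as x increases.
    """
    return 0.5 * (1.0 - math.erf(math.sqrt(2.0) * (x - x0) / w))


def crossing(xs, ps, level):
    """Blade position where a decreasing power curve crosses `level`."""
    for x1, p1, x2, p2 in zip(xs, ps, xs[1:], ps[1:]):
        if p1 >= level >= p2:
            if p1 == p2:
                return x1
            # Linear interpolation between the two bracketing samples.
            return x1 + (level - p1) * (x2 - x1) / (p2 - p1)
    raise ValueError("power never crosses the requested level")


def radius_10_90(xs, ps):
    """1/e^2 radius from the 10-90 % clip distance: x10 - x90 = 1.2816 * w."""
    p_max = max(ps)
    x10 = crossing(xs, ps, 0.1 * p_max)
    x90 = crossing(xs, ps, 0.9 * p_max)
    return (x10 - x90) / 1.2816


xs = [-3.0 + 0.01 * i for i in range(601)]         # blade positions (arb. units)
ps = [knife_edge_power(x, 0.2, 1.0) for x in xs]   # synthetic scan with w = 1
print(round(radius_10_90(xs, ps), 3))
```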

The Gaussian beam widths at different distances from the chip were extracted by fitting the measurements with the knife-edge formula. The results are presented in Extended Data Fig. 1c for two test structures designed for 7.5° and 10° emission angles. Using the Gaussian beam propagation formula, the estimated beam waists are ω0,7.5° = 2.57 ± 0.01 μm and ω0,10° = 2.48 ± 0.01 μm, respectively. The corresponding half-angle divergences are θ7.5° = 0.165 rad and θ10° = 0.167 rad.
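For an ideal Gaussian beam, the far-field half-angle divergence is θ = λ/(πω0). Evaluating it for the fitted waists at λ = 1.31 μm gives about 0.162 rad and 0.168 rad, close to the quoted values. This is a paraxial sketch, not the fitting procedure used for the measurements.

```python
import math

WAVELENGTH_UM = 1.31  # emission wavelength, um


def half_angle_divergence(w0_um, wavelength_um=WAVELENGTH_UM):
    """Far-field half-angle divergence (rad) of an ideal Gaussian beam."""
    return wavelength_um / (math.pi * w0_um)


def beam_radius(z_um, w0_um, wavelength_um=WAVELENGTH_UM):
    """1/e^2 radius at distance z from the waist: w0 * sqrt(1 + (z / zR)^2)."""
    z_r = math.pi * w0_um ** 2 / wavelength_um  # Rayleigh range
    return w0_um * math.sqrt(1 + (z_um / z_r) ** 2)


for label, w0 in (("7.5 deg grating", 2.57), ("10 deg grating", 2.48)):
    print(label, round(half_angle_divergence(w0), 3))
```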

The emission angle and orientation of the grating emitters within the pixels vary depending on their location in the FPA. An orientation distribution similar to that reported in ref. 12 was used to maximize the optical transmission through the imaging lens. On the basis of Lumerical 3D FDTD simulations, the emission angles of the grating couplers were selected to range from 7.5° to 17.5°, increasing progressively with the distance of the emitter from the centre of the FPA. In this analysis, representative 7.5° and 10° grating couplers were measured.

System imaging properties

The optical properties of the LiDAR system are a convolution of the FPA emission, which can be tuned with the use of a concave microlens deposited on-chip, and the optical lens system. The microlens serves two purposes. As shown in Extended Data Fig. 2a, one microlens per pixel was used to modify the out-of-plane emission angle of the grating couplers, to optimize transmission and FOV over the entire FPA. Simultaneously, the microlens increases the beam divergence of the grating couplers to exploit the entire aperture of the imaging lens. On the basis of Zemax simulations, the emission half-angle divergence of a single grating coupler designed for 7.5° has been increased from θ7.5° = 0.165 rad to θ7.5° = 0.237 rad on average.

The Gaussian-like profile of the emitted beam is shown in Extended Data Fig. 2b for one pixel close to the FPA centre, with (top) and without (bottom) the microlens, for a test imaging lens with f = 25 mm (VS-2514H1-SWIR from VS Technology) in a focusing configuration at around 11–12 m. The emission at the far edge of the lens is affected by aberrations owing to the high angle of incidence of the beam and only partial filling of the lens aperture. For imaging lenses with larger apertures, this phenomenon is strongly reduced.

The Rayleigh range of the 4D imaging system was determined by recording the emitted mode at different distances from the sensor with a SWIR camera (Goldeye G-008 SWIR from Allied Vision). The full widths at half maximum of the imaged mode intensity distribution along the x and y axes, perpendicular to the light propagation direction, were then measured, and the average Gaussian beam radius was computed as \(w(z)=\sqrt{\frac{w_x(z)^{2}+w_y(z)^{2}}{2}}\), in which \(w_{x,y}=\frac{\mathrm{FWHM}_{x,y}}{\sqrt{2\ln 2}}\).
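The FWHM-to-radius conversion and the averaging step can be written as two small functions. The FWHM values in the usage line are hypothetical placeholders for values read from the camera images.

```python
import math


def fwhm_to_radius(fwhm):
    """Gaussian 1/e^2 radius from an intensity FWHM: w = FWHM / sqrt(2 ln 2)."""
    return fwhm / math.sqrt(2 * math.log(2))


def average_radius(fwhm_x, fwhm_y):
    """Quadratic mean of the x and y radii: w = sqrt((wx^2 + wy^2) / 2)."""
    wx, wy = fwhm_to_radius(fwhm_x), fwhm_to_radius(fwhm_y)
    return math.sqrt((wx ** 2 + wy ** 2) / 2)


# Hypothetical FWHM values (mm) measured at one camera position.
print(round(average_radius(1.8, 2.2), 3))
```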

The results of the measurement for the non-collimated imaging configuration are shown in Extended Data Fig. 2c. From the experimental fit for a Gaussian beam propagation, without the microlens, the system presents a Rayleigh range of 5.6 m and a Gaussian beam waist of 1.53 mm at the focal point, placed at 12.5 m from the FPA. The introduction of the microlens allows for a better filling of the lens aperture, leading to a smaller Gaussian beam waist of 1.31 mm at the focal point, placed at 11.1 m from the FPA, and a Rayleigh range of 4.1 m.
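The fitted values are consistent with the Gaussian-beam relation z_R = πω0²/λ at λ = 1.31 μm, as this short check shows. It is a sketch that recomputes z_R from the quoted waists, not a repetition of the fit.

```python
import math

WAVELENGTH = 1.31e-6  # m


def rayleigh_range(w0, wavelength=WAVELENGTH):
    """z_R = pi * w0^2 / lambda for a Gaussian beam of waist radius w0 (m)."""
    return math.pi * w0 ** 2 / wavelength


print(f"without microlens: {rayleigh_range(1.53e-3):.2f} m")  # reported 5.6 m
print(f"with microlens:    {rayleigh_range(1.31e-3):.2f} m")  # reported 4.1 m
```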

For imaging systems used in a collimated configuration, the Rayleigh range of the LiDAR system is determined by the aperture size D of the lens. Their relation is described by \(z_{\mathrm{R}}=\frac{\pi\omega_0^{2}}{\lambda}=\frac{\pi D^{2}}{4\lambda}\), in which D = 2ω0 and λ is the wavelength of the emitted light. Therefore, using a microlens improves the filling of the lens aperture and correspondingly increases the Rayleigh range.

According to ray optics, the FOV of the system is related to the focal length f of the lens through the formula12 \(\mathrm{FOV}=2\tan^{-1}\left(\frac{s}{2f}\right)\), in which s is the chip dimension. The theoretical angular resolution θres of the system is estimated as \(\theta_{\mathrm{res}}=2\tan^{-1}\left(\frac{p}{2f}\right)\) (ref. 12), in which p is the pitch of the pixels.

The influence of the focal length f of the lens on the FOV and angular resolution of the LiDAR system is shown in Extended Data Fig. 3a–c. Point clouds of the same scene were acquired using three lenses with focal lengths f = 25, 35 and 50 mm. As shown in the figure, the FOV decreases as the focal length increases (approximately as 1/f for small angles), from FOV25mm = 44.44° × 26.65° to FOV50mm = 23.11° × 13.55°. A longer focal length also yields a finer angular resolution, that is, a smaller angle between adjacent pixels. The experimental minimum angular resolution was measured as the mean minimum angle between adjacent pixels, excluding the gaps. The measured FOVs and angular resolutions agree well with the theoretical predictions, as summarized in the table in Extended Data Fig. 3d.
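The ray-optics FOV formula can be checked against the measured values. Because the chip dimension s is not given in this section, this sketch back-calculates an effective s from the measured 25-mm-lens FOV and then predicts the 50-mm-lens FOV, landing at about 23.1° × 13.5°, close to the measured 23.11° × 13.55°. The same function gives θres if s is replaced by the pixel pitch p. Function names are illustrative.

```python
import math


def fov_deg(size_mm, f_mm):
    """Full field of view (deg): FOV = 2 * atan(s / (2 f))."""
    return math.degrees(2 * math.atan(size_mm / (2 * f_mm)))


def size_from_fov(fov, f_mm):
    """Invert FOV = 2 * atan(s / 2f) to get the effective dimension s (mm)."""
    return 2 * f_mm * math.tan(math.radians(fov) / 2)


# Effective dimensions back-calculated from the measured 25-mm-lens FOV ...
s_x = size_from_fov(44.44, 25.0)
s_y = size_from_fov(26.65, 25.0)
# ... used to predict the FOV with the 50-mm lens.
print(f"{fov_deg(s_x, 50.0):.2f} x {fov_deg(s_y, 50.0):.2f} deg")
```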

LiDAR control and electronics

All components of the LiDAR system (laser, IQ modulator, semiconductor optical amplifier, FPA) are mounted on custom-designed carrier boards, which interface by means of a motherboard. System control, chirp generation, signal acquisition and data processing are performed on the AMD Zynq UltraScale+ RFSoC (RF System-On-a-Chip) ZCU111 evaluation board. This integrates processor cores for control, an eight-channel ADC for signal acquisition, a two-channel digital-to-analogue converter (DAC) for chirp generation and programmable logic for digital signal processing.

The chirp for modulation is generated digitally on the field-programmable gate array using direct digital synthesis, and the two quadrature signals are generated on the integrated 14-bit 6.5-GSPS DACs. The IQ modulator board amplifies these signals and tunes the modulator arms for single-sideband modulation. Each chirp consists of a 32-μs up-ramp and a 32-μs down-ramp, and the total chirp bandwidth B is 6 GHz. This sets the system range resolution to ΔR = c/(2B) = 25 mm (ref. 14).
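The chirp parameters fix the chirp rate, the range resolution and the FFT bin spacing. This sketch also uses the 256-MSPS decimated sample rate from the data-processing description, which gives exactly 8,192 samples per 32-μs ramp; the names are illustrative.

```python
C = 299_792_458.0     # speed of light, m/s
BANDWIDTH = 6e9       # total chirp bandwidth B, Hz
CHIRP_TIME = 32e-6    # duration of one chirp ramp, s
FS = 256e6            # sample rate after decimation, S/s

slope = BANDWIDTH / CHIRP_TIME           # chirp rate, Hz/s
range_resolution = C / (2 * BANDWIDTH)   # Delta R = c / (2B), m
n_samples = round(CHIRP_TIME * FS)       # samples per chirp ramp
bin_width = FS / n_samples               # FFT bin spacing, Hz (= 1 / T)


def beat_frequency(distance_m):
    """Beat frequency of a static target: f_b = 2 * R * slope / c."""
    return 2 * distance_m * slope / C


print(f"slope {slope:.3e} Hz/s, resolution {range_resolution * 1e3:.1f} mm")
print(f"{n_samples} samples per ramp, bin width {bin_width / 1e3:.2f} kHz")
```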

Each thermo-optic switch arm on the FPA has an unknown phase offset. An automatic calibration routine is run through the RFSoC for the entire switch network. The voltage of each switch arm is swept and embedded photodiodes in the array measure the power at each output. The voltage corresponding to the maximum output is determined and stored by the RFSoC processor. To steer light to a specific position in the array, the prestored calibration values are set by a Serial Peripheral Interface to integrated DACs in the switching array. The switches settle in about 10 μs.
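The calibration loop can be sketched as follows. Here `read_power` is a hypothetical callback standing in for the SPI write and embedded-photodiode readout, and the simulated switch response (phase growing with V², output proportional to cos² of half the phase) is an assumption used only to exercise the code.

```python
import math


def calibrate_switch(read_power, voltages):
    """Sweep one switch arm; return the voltage giving maximum output power.

    read_power(v) is a hypothetical callback that applies voltage v to the
    arm and returns the photodiode reading at the desired output.
    """
    return max(voltages, key=read_power)


def calibrate_network(switches, voltages):
    """Build a lookup table {switch_id: best_voltage} for the whole tree."""
    return {sid: calibrate_switch(read, voltages)
            for sid, read in switches.items()}


def simulated_switch(phi0, k=0.5):
    """Assumed model: unknown offset phi0 plus heater phase k * V^2."""
    return lambda v: math.cos((phi0 + k * v * v) / 2) ** 2


voltages = [i * 0.01 for i in range(401)]   # 0 to 4 V sweep
table = calibrate_network({"s0": simulated_switch(1.0)}, voltages)
print(table)
```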

Data acquisition and signal processing

Each of the 16 input channels of the FPA can be illuminated simultaneously, resulting in up to 128 output signals from the chip, which would support a maximum of 20 fps at 100 μs per pixel. For a commercial product, an application-specific integrated circuit would be required to support this high frame rate. Such a circuit is under development; the off-the-shelf RFSoC used at present supports only eight acquisition channels. Thus, we acquired 8 pixels simultaneously and multiplexed all of the output channels to read the full array instead of using all 128 signals in parallel. Rows of 8 pixels are read consecutively, stepping through the entire array. Each step of reading, processing and steering takes 130 μs, which allowed a maximum frame rate of 1 fps with real-time steering, acquisition, processing and data transfer to a PC. Further optimization of the hardware could improve acquisition speed and frame rate.
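Both frame rates follow from the number of sequential steps needed to cover all 61,952 pixels. This sketch assumes the per-step time is the only overhead; the helper name is illustrative.

```python
def frame_rate(n_pixels, n_parallel, t_step):
    """Frames per second when n_parallel pixels are read per step of t_step s."""
    n_steps = -(-n_pixels // n_parallel)   # ceiling division
    return 1.0 / (n_steps * t_step)


N_PIXELS = 61_952
print(f"128 outputs, 100 us per step: {frame_rate(N_PIXELS, 128, 100e-6):.1f} fps")
print(f"8 ADC channels, 130 us per step: {frame_rate(N_PIXELS, 8, 130e-6):.2f} fps")
```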

The output analogue signals are filtered and digitized by the 4,096-MSPS ADCs on the RFSoC. They are then decimated to 256 MSPS. The signals are acquired synchronously to the chirp. On the programmable logic, the samples are multiplied by a window function, a fast Fourier transform with a fixed length of 8,192 samples is performed and the signal magnitude calculated, all in fixed point. If selected, coherent averaging of several chirps is performed. The position of the highest peak is detected on the field-programmable gate array and passed to the processor. The peak frequency is then interpolated and the two chirps are combined into distance, amplitude and velocity. Although the hardware provides the possibility to measure several echoes, the results shown all use the strongest detected echo only. The data are then passed to a PC for display and storage by means of an Ethernet interface. All manually inspected amplitude spectra showed Fourier-limited signals, indicating a sufficiently narrow laser linewidth and sufficient chirp linearity for the measured distances.
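The processing chain can be sketched in floating-point NumPy (the RFSoC implementation runs in fixed point on the programmable logic). The Hann window, the three-point parabolic peak interpolation and the sign convention f_up = f_R − f_D, f_down = f_R + f_D are assumptions, since the text does not specify them; the chirp rate uses the 6-GHz, 32-μs chirp described above.

```python
import numpy as np

C = 299_792_458.0       # speed of light, m/s
WAVELENGTH = 1.31e-6    # m
FS = 256e6              # decimated sample rate, S/s
N_FFT = 8192            # FFT length
SLOPE = 6e9 / 32e-6     # chirp rate B / T, Hz/s


def peak_frequency(samples, fs=FS, n_fft=N_FFT):
    """Window, FFT, find the strongest bin, refine it by parabolic interpolation."""
    window = np.hanning(len(samples))                    # window type assumed
    spectrum = np.abs(np.fft.rfft(samples * window, n=n_fft))
    k = int(np.argmax(spectrum[1:-1])) + 1               # ignore DC and Nyquist bins
    left, mid, right = spectrum[k - 1], spectrum[k], spectrum[k + 1]
    delta = 0.5 * (left - right) / (left - 2.0 * mid + right)  # offset in bins
    return (k + delta) * fs / n_fft


def distance_velocity(f_up, f_down):
    """Combine up- and down-chirp beat frequencies (sign convention assumed)."""
    distance = C * (f_up + f_down) / (4.0 * SLOPE)
    velocity = WAVELENGTH * (f_down - f_up) / 4.0
    return distance, velocity


# Synthetic check: target at 10 m moving at 1 m/s.
t = np.arange(N_FFT) / FS
f_range = 2 * 10.0 * SLOPE / C
f_doppler = 2 * 1.0 / WAVELENGTH
up = np.cos(2 * np.pi * (f_range - f_doppler) * t)
down = np.cos(2 * np.pi * (f_range + f_doppler) * t)
print(distance_velocity(peak_frequency(up), peak_frequency(down)))
```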

Performance comparison

The performance of our 4D imaging system has been compared with a selection of LiDARs presented in the literature. The results are reported in Extended Data Table 1. The comparison includes studies focused on OPAs10,11,19,41,42,43, FPAs12,13,22, fully integrated single-pixel LiDARs16 and transceivers17. The comparison highlights not only system performance metrics such as FOV, maximum range, resolution and energy per pulse or up and down chirps but also focuses on the level of integration of the systems, in particular on the presence of a co-integrated transceiver on-chip as well as monolithic integration of CMOS electronics, including TIAs and photodetectors.

SNR model

A key driver of LiDAR performance is the number of photons received from the target. For a coherent monostatic pixel, the received optical power PRX can be modelled by44:

$$P_{\mathrm{RX}}=\eta_{\mathrm{p}}\,\rho(\pi)\,P_{\mathrm{TX}}\frac{\lambda^{2}}{\pi\omega^{2}(z)},$$

(1)

in which PTX is the transmitted optical power, λ the light wavelength and ω(z) is the Gaussian beam radius of the detecting beam at distance z from the emitter. The total losses of the system are described by the parameter ηp, which includes losses of the lens system, directional couplers and grating couplers. The constant ρ(π) represents the inverse steradian power reflectivity of the target, as defined in ref. 44. The received signal of optical power PRX mixes with the LO of optical power PLO and creates a beat signal with frequency f0 and amplitude given by:

$$\langle I_{\mathrm{target}}\rangle=2R_{\mathrm{PD}}\sqrt{P_{\mathrm{LO}}\,P_{\mathrm{RX}}},$$

(2)

in which RPD is the responsivity of the photodetector.

The recombined optical power in the pixel creates shot noise on each photodetector, which can be modelled as white noise with the noise equivalent bandwidth Be:

$$\langle I_{\mathrm{shot}}\rangle^{2}=2qR_{\mathrm{PD}}(P_{\mathrm{LO}}+P_{\mathrm{RX}})B_{\mathrm{e}}\approx 2qR_{\mathrm{PD}}P_{\mathrm{LO}}\,B_{\mathrm{e}}.$$

(3)

Efficient detection of the signal frequency requires the peak power spectral density (PSD) of the signal to rise above the noise PSD. The SNR is defined experimentally as the ratio of the peak PSD of the target \(|S(f_0)|^2\) to the mean PSD of the noise \(\langle|S(f_{\mathrm{n}})|^2\rangle\). For a shot-noise-limited system, this is given by:

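$$\mathrm{SNR}_{\mathrm{SN}}=\frac{|S(f_{0})|^{2}}{\langle|S(f_{\mathrm{n}})|^{2}\rangle}=\frac{\langle I_{\mathrm{target}}\rangle^{2}/2}{\langle I_{\mathrm{shot}}\rangle^{2}}=2R_{\mathrm{PD}}P_{\mathrm{RX}}T/q=2N,$$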

(4)

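in which the last two equalities use the noise equivalent bandwidth Be = 1/(2T) and N = RPD PRX T/q, giving the well-known relationship between SNR and the number of detected photons N in the integration time T (ref. 44).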

As shown in Fig. 4b, the presented imaging system is not shot-noise limited but contains further noise sources affecting the signal output, including laser frequency noise, TIA thermal noise and ADC noise. Among them, the thermal noise of the amplifier is dominant. Referring the noise sources to the input of the amplifier, the SNR of the imaging system is given by:

$$\mathrm{SNR}=\frac{\langle I_{\mathrm{target}}\rangle^{2}/2}{\langle I_{\mathrm{shot}}\rangle^{2}+\langle I_{\mathrm{amp}}\rangle^{2}},$$

(5)

in which \(\langle I_{\mathrm{amp}}\rangle\) is the input-referred thermal noise of the amplifier. We define the ratio of shot noise to amplifier noise κ using:

$$\kappa^{2}=\frac{\langle I_{\mathrm{shot}}\rangle^{2}}{\langle I_{\mathrm{amp}}\rangle^{2}}.$$

(6)

The ratio of the SNR of the imaging system to its expected SNR in the shot-noise-limited regime defines a SNR penalty as:

$$\mathrm{SNR}_{\mathrm{penalty}}=\frac{\mathrm{SNR}}{\mathrm{SNR}_{\mathrm{SN}}}=\frac{1}{1+1/\kappa^{2}}.$$

(7)

We measured the noise ratio κ for each pixel in the array. First, amplifier noise was measured with no light. The mean amplitude in a single bin was measured over 100 acquisitions. Modulated light was then added and the total noise measured in the same way. Shot noise was estimated as \(\langle I_{\mathrm{shot}}^{2}\rangle=\langle I_{\mathrm{tot}}^{2}\rangle-\langle I_{\mathrm{amp}}^{2}\rangle\). The resulting distribution is shown in Fig. 4b, with a mean value of κ = 0.62. As shown in Fig. 4c, this results in a SNR penalty of −5.6 dB.
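Equation (7) and the shot-noise estimate can be evaluated directly; with κ = 0.62 the penalty is about −5.6 dB. In this sketch the inputs are mean-square noise values (squared amplitudes); how the measured single-bin amplitudes are converted to these is an assumption, and the function names are illustrative.

```python
import math


def kappa_from_noise(total_noise_power, amp_noise_power):
    """kappa = sqrt(<I_shot^2> / <I_amp^2>), with <I_shot^2> = <I_tot^2> - <I_amp^2>."""
    shot = total_noise_power - amp_noise_power
    return math.sqrt(shot / amp_noise_power)


def snr_penalty_db(kappa):
    """Equation (7) in dB: 10 * log10(1 / (1 + 1 / kappa^2))."""
    return 10 * math.log10(1.0 / (1.0 + 1.0 / kappa ** 2))


print(f"{snr_penalty_db(0.62):.1f} dB")
```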

The dependence of the 4D imaging system’s SNR, as described in equations (5) and (1), on the optical imaging system parameters, particularly the resulting Gaussian beam waist and Rayleigh range, is shown for a single acquisition in Extended Data Fig. 4. The different curves were obtained for the same emitted optical power PTX characterized in the main text, a single chirp of duration T = 32 μs and assuming RPD = 0.95 A/W, ηp = 0.23, PLO = 10.1 μW and a 20% reflectivity target. As illustrated, the distance at which the SNR decreases by 3 dB increases with the emitted Gaussian beam waist: for a waist at the lens output, equation (1) places this point at the Rayleigh range \(z_{\mathrm{R}}=\pi\omega_0^{2}/\lambda\), which scales as \(\omega_0^{2}\). If the beam waist is located at the output of the lens system, the SNR remains approximately constant until the fall-off distance, as shown in Extended Data Fig. 4a. However, for emitted modes with a smaller Gaussian beam waist focused beyond the output aperture of the imaging system, the SNR increases with distance, reaches a maximum at the focal point and then decays rapidly, as shown in Extended Data Fig. 4b. For beams that are well collimated over long distances, no marked change in SNR behaviour is observed over the designed range. In Extended Data Fig. 4c, experimental data acquired on a cardboard target are compared with the theoretical SNR model, computed for a single acquisition using the optical parameters of the 25-mm-focal-length lens shown in Extended Data Fig. 2c. The SNR uncertainty was computed as the standard deviation over all pixels hitting the target. The data agree well with the theoretical model up to 17 m from the sensor, whereas at longer ranges the SNR is around 1–3 dB larger than expected. This discrepancy could be attributed to the difference between the modelled ideal Gaussian mode and the experimentally emitted mode.
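A minimal sketch of the SNR model, combining equations (1), (4) and (7). Assumptions, each flagged in the code: the target is Lambertian, so ρ(π) = 0.2/π sr⁻¹ for 20% reflectivity; amplifier noise enters only through the measured mean κ = 0.62 (so PLO drops out under the approximation in equation (3)); and the emitted power is a hypothetical 1-mW placeholder rather than the value characterized in the main text. The waist and focus use the with-microlens values (1.31 mm at 11.1 m). In this model the SNR is 3 dB below its focal value at one Rayleigh range from the focus.

```python
import math

Q = 1.602176634e-19   # elementary charge, C
WAVELENGTH = 1.31e-6  # m


def beam_radius(z, w0, z_focus, wavelength=WAVELENGTH):
    """Gaussian 1/e^2 radius at distance z for a waist w0 located at z_focus."""
    z_r = math.pi * w0 ** 2 / wavelength
    return w0 * math.sqrt(1 + ((z - z_focus) / z_r) ** 2)


def received_power(p_tx, w_z, eta_p=0.23, rho_pi=0.2 / math.pi,
                   wavelength=WAVELENGTH):
    """Equation (1); rho_pi = 0.2/pi sr^-1 assumes a Lambertian 20 % target."""
    return eta_p * rho_pi * p_tx * wavelength ** 2 / (math.pi * w_z ** 2)


def snr_db(p_rx, t=32e-6, r_pd=0.95, kappa=0.62):
    """Shot-noise-limited SNR (eq. 4) times the amplifier penalty (eq. 7), in dB."""
    snr_sn = 2 * r_pd * p_rx * t / Q
    return 10 * math.log10(snr_sn / (1 + 1 / kappa ** 2))


P_TX = 1e-3  # hypothetical emitted power, W (placeholder, not a measured value)
for z in (5.0, 11.1, 20.0, 40.0):
    w = beam_radius(z, w0=1.31e-3, z_focus=11.1)
    print(f"z = {z:5.1f} m  SNR = {snr_db(received_power(P_TX, w)):.1f} dB")
```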

LiDAR measurements

Measurements were made with the LiDAR system to determine SNR, detection probability and range precision. Figure 4d shows several stationary targets of calibrated reflectivity placed at 7.2 m. Each target is 10 cm across, corresponding to approximately 100 pixels on each target. To estimate the measurement precision, a LiDAR measurement of the scene was taken 400 times. Noise was measured in a single frequency bin with no target and averaged over all captures. The amplitude of the target signal was measured over all pixels falling on each target, averaged over all captures and normalized by the measured noise level to give a SNR measurement. False returns giving an incorrect distance measurement were excluded from the calculation to prevent skewing the mean. The results are shown in Extended Data Table 2.

To remove false detections, an amplitude threshold Athresh is defined as four times the mean noise amplitude. Returns with a magnitude lower than this threshold are rejected as invalid points. The detection probability Pdet of a target is measured as the percentage of returns in which the amplitude exceeds the threshold. The false detection probability, Pf, corresponds to the percentage of returns exceeding the amplitude threshold but yielding incorrect distance information outside ±ΔR. The relationship between SNR from different targets and Pdet is shown in Extended Data Fig. 5 and compared with the model \(P_{\mathrm{det}}=\exp\left(-A_{\mathrm{thresh}}^{2}/(1+\mathrm{SNR})\right)\) (ref. 45).
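The detection statistics can be computed from a list of returns. In this sketch the ±ΔR window is assumed to be centred on the true target distance with ΔR = 25 mm (the range resolution), and for the model the threshold is assumed to be expressed in units normalized to the noise, as in ref. 45; the function names are illustrative.

```python
import math


def detection_stats(returns, noise_mean, true_distance, delta_r=0.025, k=4.0):
    """Empirical P_det and P_f (in %) for a list of (amplitude, distance) returns.

    A return is valid when amplitude > k * noise_mean; a valid return whose
    distance lies outside true_distance +/- delta_r counts as a false detection.
    """
    threshold = k * noise_mean
    n = len(returns)
    valid = [(a, d) for a, d in returns if a > threshold]
    wrong = [d for _, d in valid if abs(d - true_distance) > delta_r]
    return 100.0 * len(valid) / n, 100.0 * len(wrong) / n


def p_det_model(a_thresh, snr):
    """Model P_det = exp(-A_thresh^2 / (1 + SNR)); SNR linear, A_thresh normalized."""
    return math.exp(-a_thresh ** 2 / (1 + snr))


# Hypothetical returns (amplitude in noise units, distance in m) for one target.
print(detection_stats([(10, 7.20), (2, 7.21), (9, 9.5), (12, 7.19)], 1.0, 7.2))
```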

The distance and velocity precision are measured for each pixel on each target. The error of each measurement is calculated as its deviation from that pixel’s mean over all captures, which accounts for any slant of the target. The resulting error distributions of distance and velocity estimations are presented in Fig. 4f,g, respectively. As target reflectivity increases, SNR, Pdet and precision all increase.
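Subtracting each pixel’s own mean before pooling the errors is what makes the precision estimate insensitive to target slant. This minimal sketch (hypothetical function names) takes the population standard deviation of the pooled errors as the precision figure, which is an assumption.

```python
import statistics


def pooled_errors(measurements):
    """Deviation of each capture from its own pixel's mean.

    measurements maps pixel_id -> list of values (one per capture).
    Subtracting a per-pixel mean removes each pixel's fixed distance offset,
    so a slanted target does not inflate the error.
    """
    errors = []
    for values in measurements.values():
        mean = statistics.fmean(values)
        errors.extend(v - mean for v in values)
    return errors


def precision(measurements):
    """Standard deviation of the pooled error distribution."""
    return statistics.pstdev(pooled_errors(measurements))


# Two pixels on a slanted target, 10 cm apart in range, each with +/-1 mm jitter.
print(precision({"p0": [7.199, 7.201], "p1": [7.299, 7.301]}))
```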